Lightweight and Efficient Image Super-Resolution with Block State-based Recursive Network


Nov 30, 2018

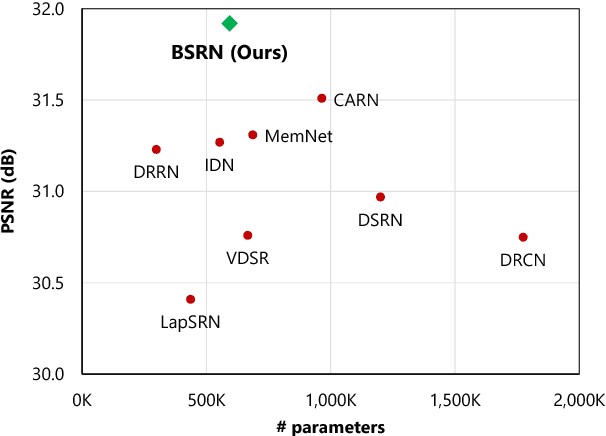

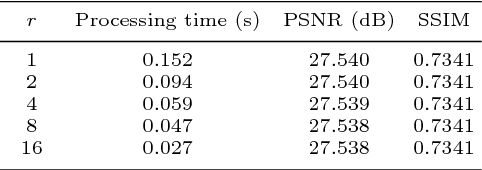

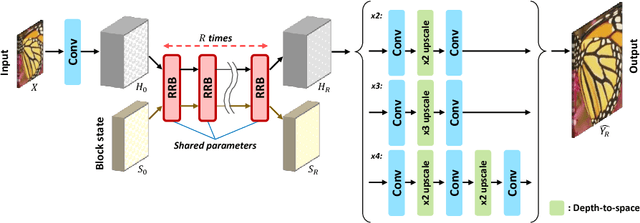

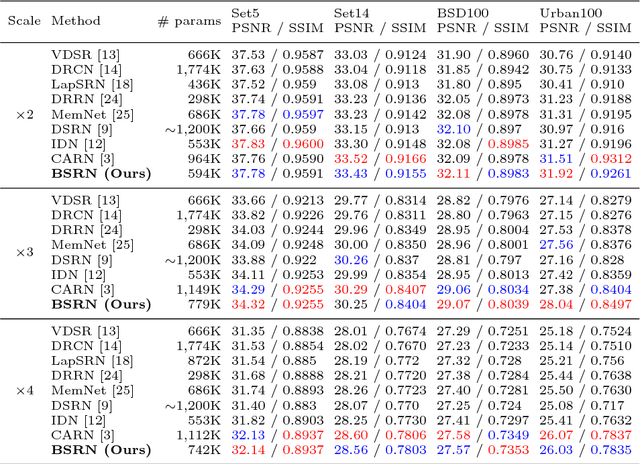

Recently, several deep learning-based image super-resolution methods have been developed by stacking massive numbers of layers. However, this leads to large model sizes and high computational complexity, so some recursive parameter-sharing methods have also been proposed. Nevertheless, their designs do not fully exploit the potential of the recursive operation. In this paper, we propose a novel, lightweight, and efficient super-resolution method that maximizes the usefulness of the recursive architecture by introducing a block state-based recursive network. By utilizing the block state, the recursive part of our model can easily track the status of the current image features. We show the benefits of the proposed method in terms of model size, speed, and efficiency. In addition, we show that our method outperforms the other state-of-the-art methods.
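To make the idea of recursive parameter sharing with a carried state concrete, the following is a minimal toy sketch in plain Python. It is not the paper's architecture: the function names (`shared_block`, `run_recursive`, `parameter_count`), the scalar weights, and the `tanh` update rule are all assumptions chosen for illustration. It only shows two things the abstract describes: one set of weights is reused at every recursion, and a separate state is passed along with the features from one recursion to the next.

```python
import math


def shared_block(features, state, weights):
    """Apply one toy recursive block (hypothetical update rule).

    `weights` holds four scalars that are reused at every recursion.
    Returns the updated features and the updated block state.
    """
    w_f, w_s, u_f, u_s = weights
    # New features depend on the current features and the carried state.
    new_features = [math.tanh(w_f * f + w_s * s) for f, s in zip(features, state)]
    # The state is updated from the new features and the previous state.
    new_state = [math.tanh(u_f * f + u_s * s) for f, s in zip(new_features, state)]
    return new_features, new_state


def run_recursive(features, weights, num_recursions):
    """Run the same block `num_recursions` times, carrying the state along."""
    state = [0.0] * len(features)  # assumed zero initial state
    for _ in range(num_recursions):
        features, state = shared_block(features, state, weights)
    return features, state


def parameter_count(params_per_block, num_blocks, shared):
    """Count parameters for shared (recursive) versus stacked blocks."""
    return params_per_block if shared else params_per_block * num_blocks


if __name__ == "__main__":
    weights = (0.5, 0.3, 0.2, 0.8)  # illustrative values only
    out, final_state = run_recursive([0.1, -0.4, 0.9], weights, num_recursions=16)
    print(len(out), len(final_state))
    print(parameter_count(4, 16, shared=True), parameter_count(4, 16, shared=False))
```

In this toy setting the recursive variant keeps its parameter count fixed regardless of how many times the block is applied, while a stacked variant grows linearly with depth. That is the trade-off the abstract refers to when contrasting stacked layers with recursive designs.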
