Leveraging Implicit Expert Knowledge for Non-Circular Machine Learning in Sepsis Prediction

Paper and Code

Sep 20, 2019

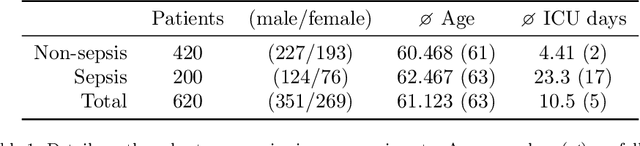

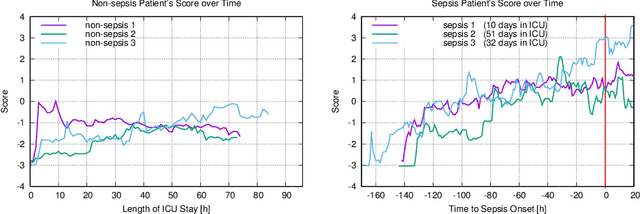

Sepsis is the leading cause of death in non-coronary intensive care units. Moreover, a delay of antibiotic treatment of patients with severe sepsis by only few hours is associated with increased mortality. This insight makes accurate models for early prediction of sepsis a key task in machine learning for healthcare. Previous approaches have achieved high AUROC by learning from electronic health records where sepsis labels were defined automatically following established clinical criteria. We argue that the practice of incorporating the clinical criteria that are used to automatically define ground truth sepsis labels as features of severity scoring models is inherently circular and compromises the validity of the proposed approaches. We propose to create an independent ground truth for sepsis research by exploiting implicit knowledge of clinical practitioners via an electronic questionnaire which records attending physicians' daily judgements of patients' sepsis status. We show that despite its small size, our dataset allows to achieve state-of-the-art AUROC scores. An inspection of learned weights for standardized features of the linear model lets us infer potentially surprising feature contributions and allows to interpret seemingly counterintuitive findings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge