Learning without feedback: Direct random target projection as a feedback-alignment algorithm with layerwise feedforward training


Sep 03, 2019

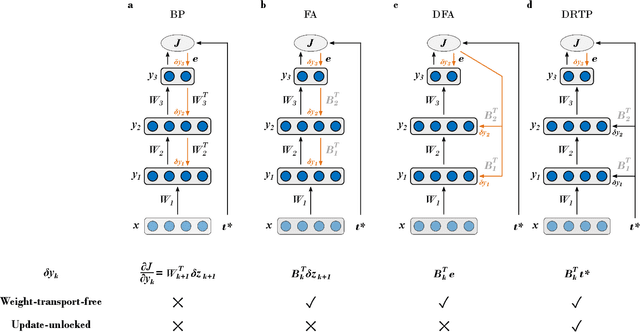

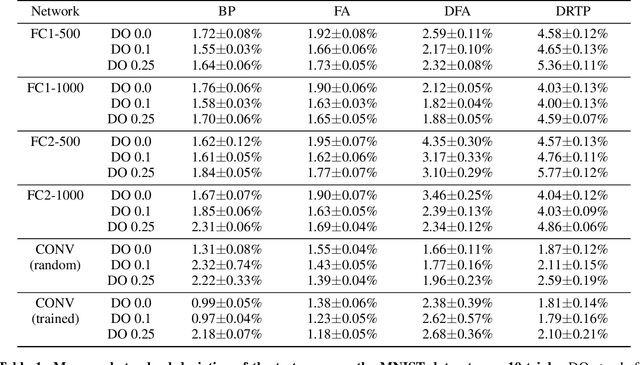

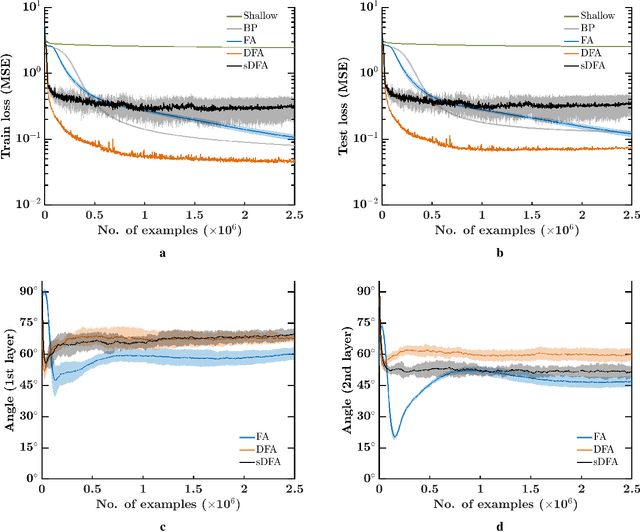

While the backpropagation of error (BP) algorithm enabled a rapid rise in the development and deployment of artificial neural networks, two key issues currently preclude its biological plausibility: (i) symmetry is required between forward and backward weights, which is known as the weight transport problem, and (ii) updates are locked until both the forward and backward passes have been completed, which is known as update locking. The feedback alignment (FA) algorithm uses fixed random feedback weights to relax the weight transport problem. The direct feedback alignment (DFA) variation directly propagates the output error to each hidden layer through fixed random connectivity matrices. In this work, we show that using only the error sign is sufficient to maintain feedback alignment and to provide learning in the hidden layers. Because, in classification problems, the error sign information is already contained in the target vector, using the latter as a proxy for the error brings three advantages: (i) it solves the weight transport problem by eliminating the requirement for an explicit feedback pathway, which also reduces the computational workload, (ii) it reduces memory requirements by removing update locking, allowing for weight updates to be computed in each layer independently without requiring a full forward pass, and (iii) it leads to a purely feedforward and low-cost algorithm that only requires a label-dependent random vector selection to estimate the layerwise loss gradients. We therefore propose the direct random target projection (DRTP) algorithm and demonstrate on the MNIST and CIFAR-10 datasets that, despite the absence of an explicit error feedback, DRTP performance can still lie close to that of BP, FA and DFA. The low memory and computational cost of DRTP and its reliance only on layerwise feedforward computation make it suitable for deployment in adaptive edge computing devices.
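The layerwise update described in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. The function names `init_drtp` and `drtp_step`, the tanh hidden activations, the sigmoid output layer, the initialization ranges, and the sign convention of the hidden-layer update are all assumptions made for illustration. The points it tries to show are that projecting a one-hot target through a fixed random matrix reduces to selecting one random vector per label, and that each hidden layer can be updated as soon as its own activation is available, without waiting for the rest of the forward pass.

```python
import numpy as np

def init_drtp(sizes, rng):
    """sizes = [n_in, n_hidden_1, ..., n_out]; all names here are hypothetical."""
    # Trainable forward weights, one matrix per layer.
    W = [rng.normal(0.0, 1.0 / np.sqrt(m), size=(n, m))
         for m, n in zip(sizes[:-1], sizes[1:])]
    # One fixed random matrix per hidden layer, shape (n_classes, n_hidden).
    # These are never trained.
    B = [rng.uniform(-1.0, 1.0, size=(sizes[-1], n)) for n in sizes[1:-1]]
    return W, B

def drtp_step(W, B, x, label, lr=0.01):
    """One training step on a single sample; updates W in place."""
    y = x
    for k in range(len(W) - 1):
        h = np.tanh(W[k] @ y)
        # Projecting a one-hot target through B_k^T is the same as picking
        # row `label` of B_k: a label-dependent random vector selection.
        # It is modulated by the tanh derivative (assumed activation).
        delta = B[k][label] * (1.0 - h ** 2)
        # The update uses only quantities local to this layer, so it can be
        # applied before later layers are evaluated (assumed sign convention).
        W[k] -= lr * np.outer(delta, y)
        y = h
    # The output layer sees the true error directly, so no feedback path is
    # needed (sigmoid output with a cross-entropy-style error is an assumption).
    out = 1.0 / (1.0 + np.exp(-(W[-1] @ y)))
    target = np.zeros_like(out)
    target[label] = 1.0
    W[-1] -= lr * np.outer(out - target, y)
    return out

rng = np.random.default_rng(0)
W, B = init_drtp([4, 5, 3], rng)
out = drtp_step(W, B, rng.normal(size=4), label=2, lr=0.1)
```

Because the hidden-layer signal depends only on the label and the fixed matrices, no error or activation from later layers has to be stored, which is where the memory and compute savings claimed in the abstract would come from.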
