Learning Optimal Power Flow: Worst-Case Guarantees for Neural Networks

Paper and Code

Jun 19, 2020

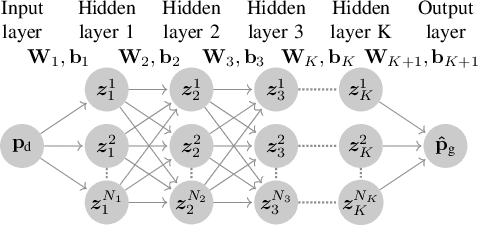

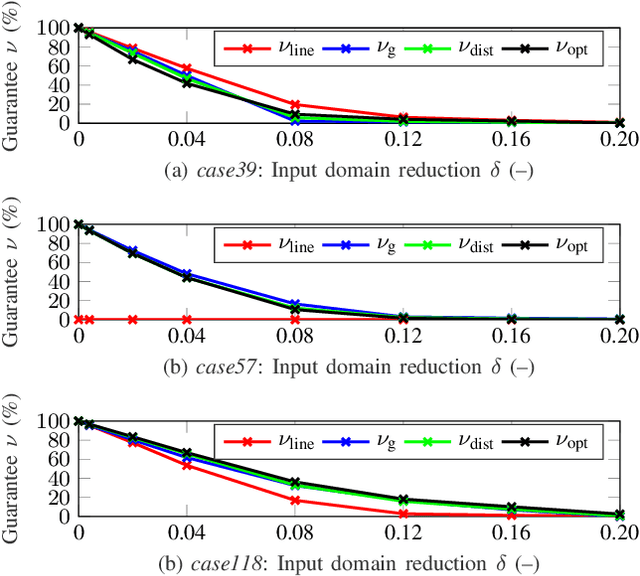

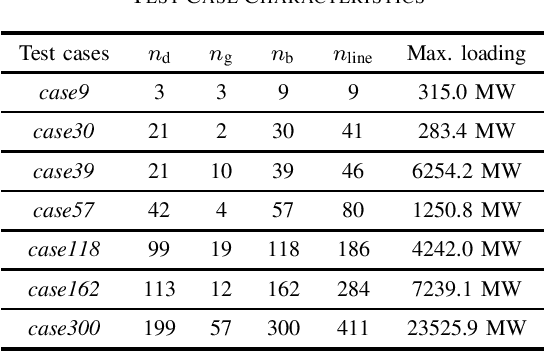

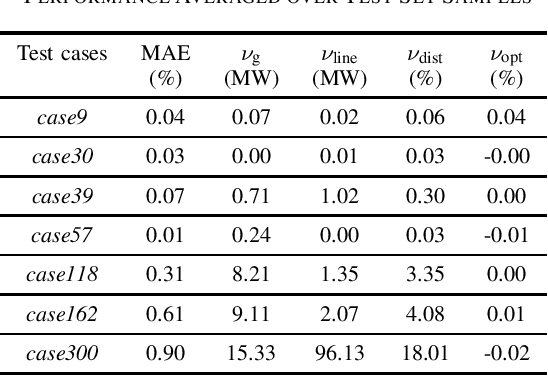

This paper introduces, for the first time, a framework for obtaining provable worst-case guarantees on neural network performance, using learning for optimal power flow (OPF) problems as a guiding example. Neural networks have the potential to substantially reduce the computing time of OPF solutions. However, the lack of guarantees on their worst-case performance remains a major barrier to their adoption in practice. This work aims to remove this barrier. We formulate mixed-integer linear programs to obtain worst-case guarantees for neural network predictions related to (i) maximum constraint violations, (ii) maximum distances between predicted and optimal decision variables, and (iii) maximum sub-optimality. We demonstrate our methods on a range of PGLib-OPF networks with up to 300 buses. We show that the worst-case guarantees can be up to one order of magnitude larger than the empirical lower bounds calculated with conventional methods. More importantly, we show that the worst-case predictions appear at the boundaries of the training input domain, and we demonstrate how the worst-case guarantees can be systematically reduced by training the neural networks on a larger input domain than the one on which they are evaluated.
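To make the idea of a mixed-integer linear program over a trained network concrete, the following is a minimal sketch of how a ReLU network is commonly encoded with binary variables (a standard big-M encoding). The abstract does not state the formulation, so the encoding, the symbols $\hat{z}_k$, $z_k$, $\beta_k$, the bounds $\hat{z}_k^{\min} < 0 < \hat{z}_k^{\max}$, and the linear constraint $c^\top \hat{y} \le d$ are all assumptions introduced here for illustration and may differ from what the paper uses.

```latex
% Assumed notation: x is the network input, D the input domain,
% \hat{y}(x) the network prediction, and c^T \hat{y} <= d a hypothetical
% linear constraint whose worst-case violation is sought.
\begin{align}
  v^{\max} \;=\; \max_{x \in \mathcal{D},\; z,\; \beta} \quad
      & c^\top \hat{y} - d \\
  \text{s.t.} \quad
      & \hat{z}_k = w_k^\top z_{\mathrm{prev}} + b_k
          && \text{(pre-activation of neuron } k\text{)} \\
      & z_k \le \hat{z}_k - \hat{z}_k^{\min}\,(1 - \beta_k) \\
      & z_k \ge \hat{z}_k \\
      & z_k \le \hat{z}_k^{\max}\,\beta_k \\
      & z_k \ge 0 \\
      & \beta_k \in \{0, 1\}
\end{align}
% With \beta_k = 1 the constraints force z_k = \hat{z}_k >= 0 (neuron active);
% with \beta_k = 0 they force z_k = 0 and \hat{z}_k <= 0 (neuron inactive),
% so together they reproduce z_k = max(\hat{z}_k, 0) exactly.
```

Under these assumptions, a globally optimal value $v^{\max} \le 0$ would certify that the constraint is never violated for any input in $\mathcal{D}$, whereas sampling inputs and evaluating the network can only produce a lower bound on $v^{\max}$.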
