Learning Generalizable Physiological Representations from Large-scale Wearable Data

Paper and Code

Nov 09, 2020

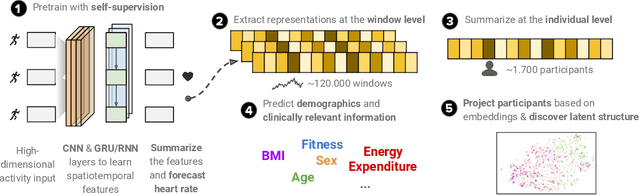

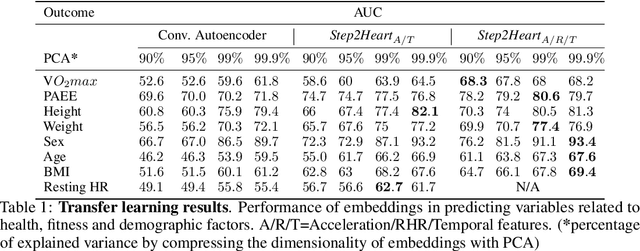

To date, research on sensor-equipped mobile devices has primarily focused on the purely supervised task of human activity recognition (walking, running, etc), demonstrating limited success in inferring high-level health outcomes from low-level signals, such as acceleration. Here, we present a novel self-supervised representation learning method using activity and heart rate (HR) signals without semantic labels. With a deep neural network, we set HR responses as the supervisory signal for the activity data, leveraging their underlying physiological relationship. We evaluate our model in the largest free-living combined-sensing dataset (comprising more than 280,000 hours of wrist accelerometer & wearable ECG data) and show that the resulting embeddings can generalize in various downstream tasks through transfer learning with linear classifiers, capturing physiologically meaningful, personalized information. For instance, they can be used to predict (higher than 70 AUC) variables associated with individuals' health, fitness and demographic characteristics, outperforming unsupervised autoencoders and common bio-markers. Overall, we propose the first multimodal self-supervised method for behavioral and physiological data with implications for large-scale health and lifestyle monitoring.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge