Learning Contact-aware CPG-based Locomotion in a Soft Snake Robot


May 10, 2021

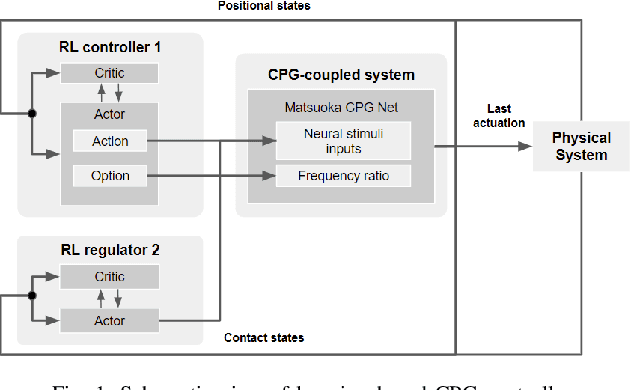

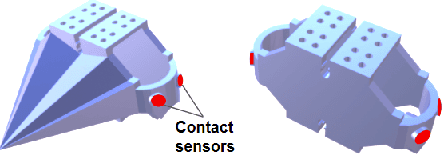

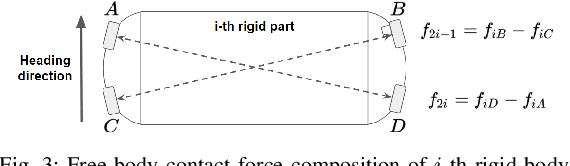

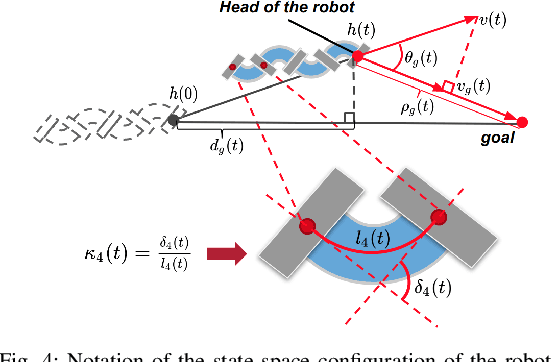

In this paper, we present a model-free, learning-based control scheme that improves the contact-aware locomotion performance of a soft snake robot in cluttered environments. The scheme comprises two cooperative controllers: a bio-inspired controller (C1) that controls both the steering and the velocity of the robot, and an event-triggered regulator (R2) that controls steering in anticipation of obstacle contacts and during contact. The outputs of the two controllers are combined into the input of a Matsuoka CPG network, which generates smooth, rhythmic actuation inputs for the soft snake. To enable stable and efficient learning with two controllers, we develop a game-theoretic process, fictitious play, to train C1 and R2 with a shared potential-field-based reward function for goal-tracking tasks. The proposed approach is evaluated in simulation and shows a significant improvement in locomotion performance in environments with obstacles compared with two baseline controllers.
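To make the role of the Matsuoka CPG concrete, the following is a minimal sketch of a single two-neuron Matsuoka oscillator in its standard textbook form, integrated with forward Euler. It is not the network used in the paper: the function name `simulate_matsuoka`, the parameter values, the initial state, and the idea of passing one tonic input per neuron (`u_e`, `u_f`) are all assumptions chosen for illustration, and the abstract does not specify how the C1 and R2 outputs are mapped onto these inputs.

```python
def simulate_matsuoka(u_e, u_f, steps=10000, dt=0.001,
                      tau_r=0.1, tau_a=0.2, a=2.0, b=2.5):
    """Forward-Euler simulation of one two-neuron Matsuoka oscillator.

    u_e, u_f : tonic inputs to the two mutually inhibiting neurons
               (hypothetical stand-ins for the composed controller outputs).
    Returns the list of outputs y_e - y_f, one per time step.
    All default parameter values are illustrative assumptions.
    """
    x_e, x_f = 0.1, 0.0  # membrane states; asymmetric start breaks symmetry
    v_e, v_f = 0.0, 0.0  # self-inhibition (adaptation) states
    outputs = []
    for _ in range(steps):
        # Firing rates are the rectified membrane states.
        y_e, y_f = max(0.0, x_e), max(0.0, x_f)
        # Each neuron is inhibited by the other's firing rate (weight a)
        # and by its own adaptation state (weight b), and driven by u.
        dx_e = (-x_e - a * y_f - b * v_e + u_e) / tau_r
        dx_f = (-x_f - a * y_e - b * v_f + u_f) / tau_r
        dv_e = (y_e - v_e) / tau_a
        dv_f = (y_f - v_f) / tau_a
        x_e += dt * dx_e
        x_f += dt * dx_f
        v_e += dt * dv_e
        v_f += dt * dv_f
        outputs.append(max(0.0, x_e) - max(0.0, x_f))
    return outputs


if __name__ == "__main__":
    signal = simulate_matsuoka(1.0, 1.0)
    print(min(signal), max(signal))
```

With equal tonic inputs and these parameter values, the output alternates in sign and stays bounded by the input magnitude, which is the smooth, rhythmic behavior the abstract refers to. Making one input larger than the other biases the output toward one side, which is one plausible way a steering command could act on the oscillator.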
