Learning Category-level Shape Saliency via Deep Implicit Surface Networks


Dec 14, 2020

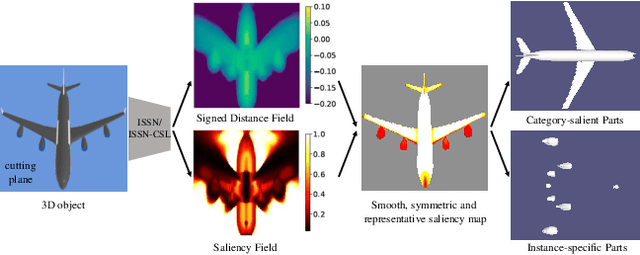

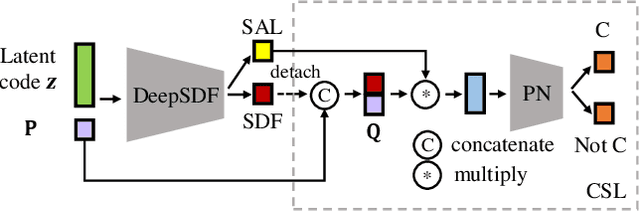

This paper is motivated by a fundamental curiosity about what defines a category of object shapes. For example, it is common knowledge that a plane has wings and a chair has legs. Given the large shape variations among different instances of the same category, we are formally interested in developing a quantity defined for individual points on a continuous object surface; this quantity specifies how much each surface point contributes to forming the shape as a member of its category. We term this quantity category-level shape saliency, or shape saliency for short. Technically, we propose to learn saliency maps for shape instances of the same category from a deep implicit surface network: the network predicts sensible saliency scores for points sampled in the implicit surface field by constraining the capacity of the input latent code. We further enhance the saliency prediction with an additional contrastive training loss. We expect such learned surface maps of shape saliency to be smooth, symmetric, and semantically representative, and we verify these properties by comparing our method with alternative ways of computing saliency. Notably, we show that by leveraging the learned shape saliency, we can reconstruct either the category-salient or the instance-specific parts of object surfaces; the semantic representativeness of the learned saliency is also reflected in its effectiveness at guiding the selection of surface points for better point cloud classification.
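The abstract states that the learned saliency can guide which surface points are kept for point cloud classification, but it does not spell out the selection rule. As a minimal sketch, assuming per-point saliency scores are already available and that one simply keeps the k highest-scoring points, the selection step could look like this. `select_salient_points` is a hypothetical helper for illustration, not the authors' code.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def select_salient_points(
    points: Sequence[Point], saliency: Sequence[float], k: int
) -> List[Point]:
    """Keep the k points with the highest saliency scores (hypothetical helper).

    Assumption: a plain top-k rule; the paper's actual selection scheme may differ.
    """
    if len(points) != len(saliency):
        raise ValueError("points and saliency must have the same length")
    if not 0 <= k <= len(points):
        raise ValueError("k must be between 0 and the number of points")
    # Sort indices by descending saliency; Python's sort is stable,
    # so points with equal scores keep their original order.
    order = sorted(range(len(points)), key=lambda i: -saliency[i])
    # Take the top-k indices, then restore input order for the output cloud.
    chosen = sorted(order[:k])
    return [points[i] for i in chosen]


if __name__ == "__main__":
    pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
    scores = [0.1, 0.9, 0.5, 0.7]
    print(select_salient_points(pts, scores, 2))  # [(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]
```

Restoring the original index order after the top-k cut keeps the subsampled cloud aligned with the input ordering, which makes it easy to compare against a random or farthest-point subsample of the same size.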
