Learning-Based Symbol Level Precoding: A Memory-Efficient Unsupervised Learning Approach


Nov 15, 2021

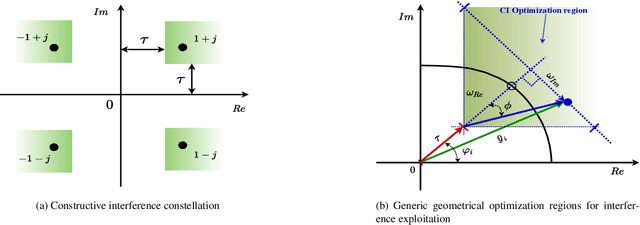

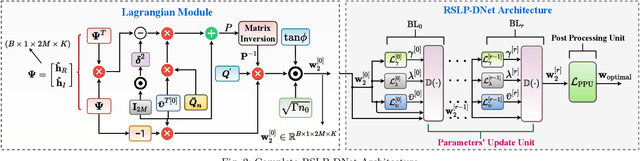

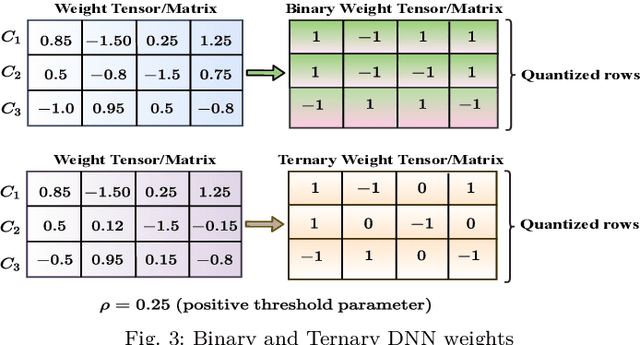

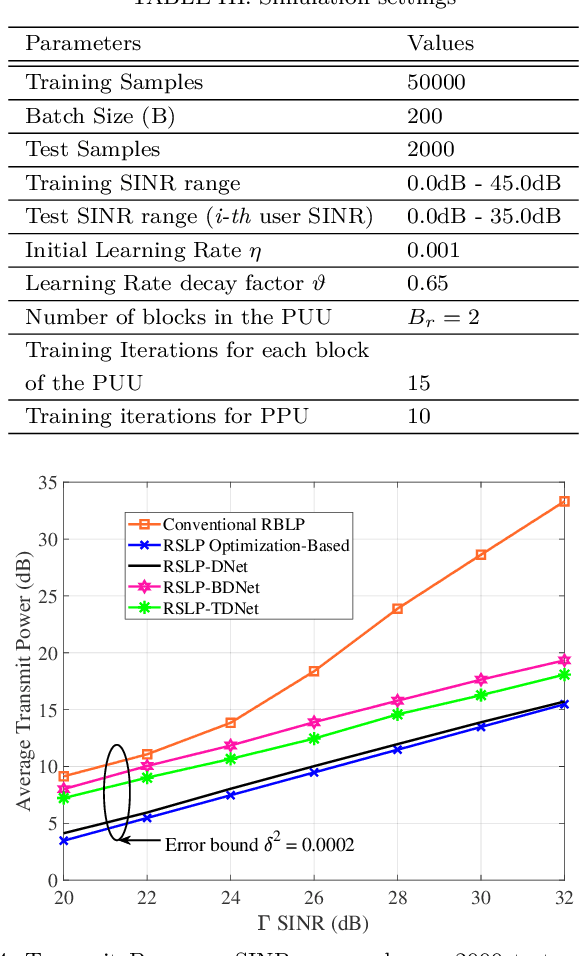

Symbol level precoding (SLP) has been proven to be an effective means of managing interference in multiuser downlink transmission while also enhancing the received signal power. This paper proposes an unsupervised learning-based SLP built on quantized deep neural networks (DNNs). Rather than simply training a DNN in a supervised mode, our proposal unfolds a power minimization SLP formulation for an imperfect-channel scenario using the proximal logarithmic ("log") barrier function of the interior point method (IPM). We use binary and ternary quantization to compress the DNN's weight values. The results show significant memory savings compared to the existing full-precision SLP-DNet, with model compression of approximately 21x for the binary DNN-based SLP (RSLP-BDNet) and 13x for the ternary DNN-based SLP (RSLP-TDNets).
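The abstract does not say which binary and ternary quantizers are used, so the following is only a minimal sketch under stated assumptions. It shows how weight quantization compresses a layer, using two common schemes as stand-ins: sign binarization with a scale equal to the mean absolute weight (XNOR-Net style), and ternary thresholding at Δ = 0.7·mean|w| (ternary-weight-network style). The function names and the 0.7 factor are illustrative assumptions, not the RSLP-BDNet or RSLP-TDNets implementation.

```python
from typing import List, Tuple


def _mean_abs(weights: List[float]) -> float:
    if not weights:
        raise ValueError("weights must be non-empty")
    return sum(abs(w) for w in weights) / len(weights)


def binarize(weights: List[float]) -> Tuple[List[int], float]:
    """Assumed binary scheme: w ~ alpha * sign(w), alpha = mean(|w|).
    Each weight is stored as 1 bit plus one shared float scale."""
    alpha = _mean_abs(weights)
    codes = [1 if w >= 0 else -1 for w in weights]
    return codes, alpha


def ternarize(weights: List[float], delta_scale: float = 0.7) -> Tuple[List[int], float]:
    """Assumed ternary scheme: codes in {-1, 0, +1} using the threshold
    delta = delta_scale * mean(|w|); alpha = mean |w| of the surviving weights.
    Three states need 2 bits per weight."""
    delta = delta_scale * _mean_abs(weights)
    codes = [1 if w > delta else -1 if w < -delta else 0 for w in weights]
    kept = [abs(w) for w, c in zip(weights, codes) if c != 0]
    alpha = sum(kept) / len(kept) if kept else 0.0
    return codes, alpha


def dequantize(codes: List[int], alpha: float) -> List[float]:
    """Reconstruct approximate full-precision weights from codes and scale."""
    return [alpha * c for c in codes]


def weight_compression_ratio(bits_per_weight: int, full_bits: int = 32) -> float:
    """Ideal storage ratio versus 32-bit floats, ignoring scales and any
    layers or parameters kept in full precision."""
    return full_bits / bits_per_weight


if __name__ == "__main__":
    w = [0.9, -0.2, 0.05, -1.1, 0.4]
    print(binarize(w))    # codes [1, -1, 1, -1, 1], alpha close to 0.53
    print(ternarize(w))   # codes [1, 0, 0, -1, 1], alpha close to 0.8
    print(weight_compression_ratio(1), weight_compression_ratio(2))  # 32.0 16.0
```

The 32x (binary) and 16x (ternary) figures from `weight_compression_ratio` are idealized upper bounds for weight storage alone; they are not a reproduction of the approximately 21x and 13x whole-model compression reported for RSLP-BDNet and RSLP-TDNets.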
