LDC-VAE: A Latent Distribution Consistency Approach to Variational AutoEncoders


Sep 22, 2021

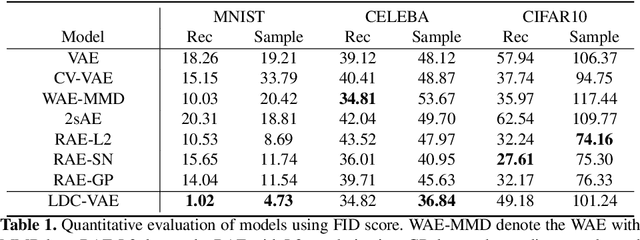

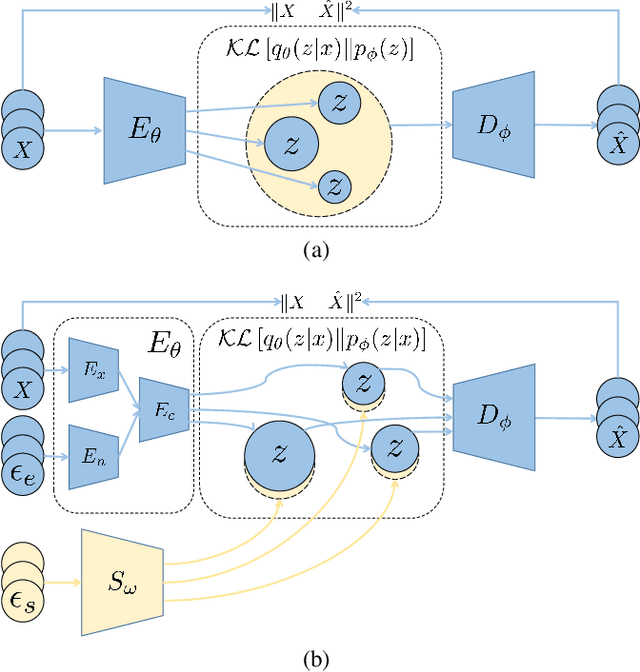

Variational autoencoders (VAEs), an important class of generative models, have attracted considerable research interest and found many successful applications. However, when optimizing the evidence lower bound (ELBO), it remains challenging to keep the learned latent distribution consistent with the prior latent distribution, and this inconsistency ultimately leads to unsatisfactory data generation. In this paper, we propose a latent distribution consistency approach that avoids such substantial inconsistency between the posterior and prior latent distributions during ELBO optimization. We name our method latent distribution consistency VAE (LDC-VAE). We achieve this by assuming that the true posterior distribution in latent space takes a Gibbs form and approximating it with our encoder. However, the approximation to such a Gibbs posterior has no analytical solution, and traditional approximation methods, such as iterative sampling-based MCMC, are time-consuming. To address this problem, we use Stein Variational Gradient Descent (SVGD) to approximate the Gibbs posterior. We also use SVGD to train a sampler net that draws samples efficiently from the Gibbs posterior. Comparative studies on popular image generation datasets show that our method achieves performance comparable to or better than several powerful improved VAE variants.
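The key computational step above is SVGD applied to an unnormalized Gibbs density p(z) ∝ exp(-E(z)). The following is a minimal 1-D sketch of the standard SVGD update with an RBF kernel and a median-heuristic bandwidth. It rests on several assumptions: the toy energy E(z) = 0.5 (z - 2)^2 is a hypothetical stand-in for the paper's Gibbs posterior, and the helper names (`median_bandwidth`, `svgd_direction`, `run_svgd`, `gibbs_score`), the step size, the particle count, and the iteration count are illustrative choices, not the LDC-VAE implementation.

```python
import math
from statistics import median, pstdev


def median_bandwidth(particles):
    """Median heuristic h = med^2 / log(n); assumes at least two particles."""
    n = len(particles)
    dists = [abs(a - b) for i, a in enumerate(particles) for b in particles[i + 1:]]
    return max(median(dists) ** 2 / math.log(n), 1e-8)  # floor avoids h == 0


def svgd_direction(particles, score):
    """phi(x_i) = (1/n) * sum_j [k(x_j, x_i) * score(x_j) + d/dx_j k(x_j, x_i)]."""
    n = len(particles)
    h = median_bandwidth(particles)
    scores = [score(x) for x in particles]
    phis = []
    for xi in particles:
        total = 0.0
        for xj, sj in zip(particles, scores):
            k = math.exp(-((xj - xi) ** 2) / h)  # RBF kernel k(x_j, x_i)
            # Driving term k * score(x_j) pulls x_i toward high-density regions;
            # repulsive term d/dx_j k(x_j, x_i) = 2 (x_i - x_j) / h * k pushes particles apart.
            total += k * sj + 2.0 * (xi - xj) / h * k
        phis.append(total / n)
    return phis


def run_svgd(score, init, step_size=0.05, n_iter=1500):
    """Deterministically transport the initial particles toward the target density."""
    particles = list(init)
    for _ in range(n_iter):
        phis = svgd_direction(particles, score)
        particles = [x + step_size * p for x, p in zip(particles, phis)]
    return particles


def gibbs_score(z, mu=2.0):
    """Score of a toy (hypothetical) Gibbs density p(z) ∝ exp(-E(z)), E(z) = 0.5 * (z - mu)^2.

    d/dz log p(z) = -dE/dz = -(z - mu); the normalizing constant is never needed.
    """
    return -(z - mu)


if __name__ == "__main__":
    init = [-3.0 + 2.0 * i / 29 for i in range(30)]  # 30 particles placed far from the target
    particles = run_svgd(gibbs_score, init)
    print(f"mean={sum(particles) / len(particles):.3f}  std={pstdev(particles):.3f}")
```

Because SVGD only uses the score ∇ log p(z), the intractable normalizer of the Gibbs form drops out, and the particles move by deterministic updates rather than by a random walk. The sampler net described in the abstract would amortize this step by training a network whose outputs follow the SVGD direction instead of keeping free particles; that part is not sketched here.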
