Kinematically-Informed Interactive Perception: Robot-Generated 3D Models for Classification


Jan 17, 2019

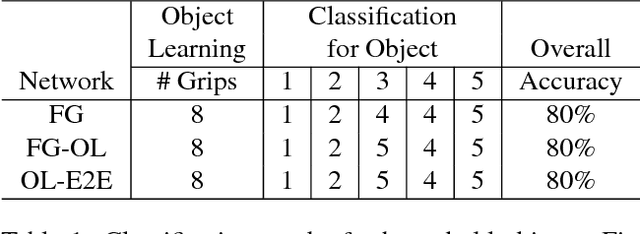

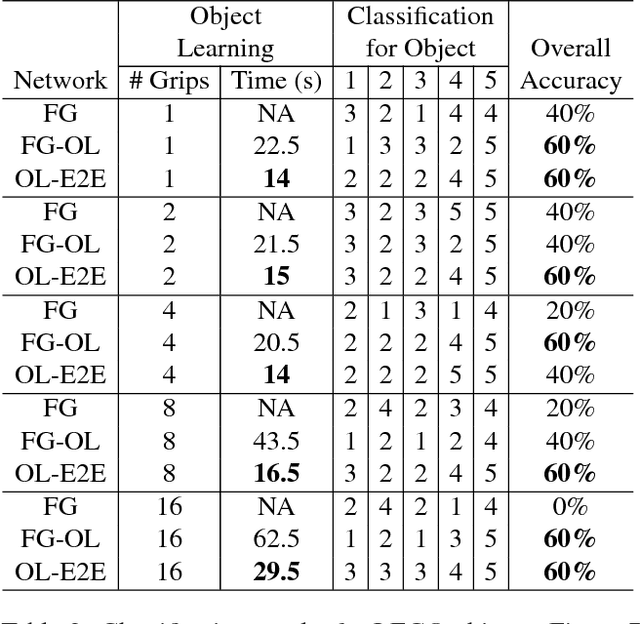

To be useful in everyday environments, robots must be able to observe and learn about objects. Recent datasets have enabled progress in classifying data into known object categories; however, it remains unclear how to collect reliable object data when operating in cluttered, partially observable environments. In this paper, we address the problem of building complete 3D models of real-world objects using a robot platform that can remove objects from clutter for better classification. Furthermore, we are able to learn entirely new object categories as they are encountered, enabling the robot to classify previously unidentifiable objects during future interactions. We build models of grasped objects through simultaneous manipulation and observation, and we guide the processing of visual data using a kinematic description of the robot to combine observations from different viewpoints and remove background noise. To test our framework, we use a mobile manipulation robot equipped with an RGBD camera to build voxelized representations of unknown objects and then classify them into new categories. We then have the robot remove objects from clutter to manipulate, observe, and classify them in real time.
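As a rough illustration of how a kinematic description can guide this processing, the sketch below fuses several camera views into one voxel grid. It is not the paper's implementation. It assumes each view arrives as an (N, 3) point cloud in the camera frame, together with a 4x4 camera-to-gripper transform obtained from forward kinematics. The function names, the 0.15 m crop half-extent, and the 32-voxel resolution are all hypothetical choices made for this example.

```python
import numpy as np


def to_gripper_frame(points_cam, T_gripper_from_cam):
    """Map an (N, 3) camera-frame cloud into the gripper frame (4x4 transform)."""
    homogeneous = np.hstack([points_cam, np.ones((len(points_cam), 1))])
    return (homogeneous @ T_gripper_from_cam.T)[:, :3]


def crop_to_box(points, half_extent):
    """Keep points inside an axis-aligned cube centred on the gripper origin.

    Anything outside the cube is treated as background and discarded.
    """
    inside = np.all(np.abs(points) <= half_extent, axis=1)
    return points[inside]


def voxelize(points, half_extent, resolution):
    """Mark every voxel of a resolution^3 grid that contains at least one point."""
    grid = np.zeros((resolution,) * 3, dtype=bool)
    if len(points) == 0:
        return grid
    # Rescale coordinates from [-half_extent, half_extent] to [0, resolution].
    scaled = (points + half_extent) / (2.0 * half_extent) * resolution
    idx = np.clip(scaled.astype(int), 0, resolution - 1)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid


def fuse_views(views, half_extent=0.15, resolution=32):
    """Union the occupancy grids of all views, expressed in the gripper frame.

    `views` is an iterable of (points_cam, T_gripper_from_cam) pairs.
    """
    grid = np.zeros((resolution,) * 3, dtype=bool)
    for points_cam, T_gripper_from_cam in views:
        local = to_gripper_frame(points_cam, T_gripper_from_cam)
        local = crop_to_box(local, half_extent)
        grid |= voxelize(local, half_extent, resolution)
    return grid
```

Because every cloud is expressed in the gripper frame before fusion, the grasped object stays fixed relative to the grid while the arm moves, so background points fall outside the crop box regardless of viewpoint. A real system would also need to mask out the gripper's own geometry, which this sketch omits.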
