Keyphrase Generation Beyond the Boundaries of Title and Abstract

Dec 13, 2021

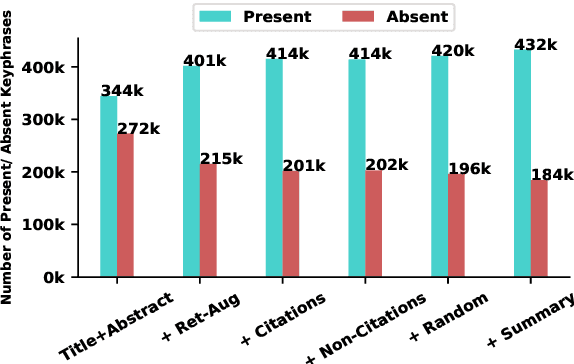

Keyphrase generation aims to generate phrases (keyphrases) that best describe a given document. In scholarly domains, current approaches to this task are neural and have largely worked with only the title and abstract of the articles. In this work, we explore whether integrating additional data, either from semantically similar articles or from the full text of the given article, can help a neural keyphrase generation model. We find that adding sentences from the full text, particularly in the form of a summary of the article, can significantly improve the generation of both types of keyphrases: those present in the title and abstract and those absent from them. Experimental results on three acclaimed models, along with one of the latest transformer models suitable for longer documents, Longformer Encoder-Decoder (LED), validate this observation. We also present FullTextKP, a new large-scale scholarly dataset for keyphrase generation, which we use for our experiments. Unlike prior large-scale datasets, FullTextKP includes the full text of the articles alongside the title and abstract. We will release the source code to stimulate research on the proposed ideas.
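
To make the distinction between present and absent keyphrases concrete, the following is a minimal sketch of how gold keyphrases might be split by whether they occur verbatim in the title or abstract. It is an illustration only, not the authors' code: the function names (`normalize`, `is_present`, `split_keyphrases`) and the example inputs are hypothetical, and matching here is a plain lowercase token match. Published evaluations often apply stemming before matching, which this sketch omits.

```python
import re


def normalize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens (no stemming)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def is_present(keyphrase: str, source: str) -> bool:
    """Return True if the keyphrase tokens occur contiguously in the source."""
    kp = normalize(keyphrase)
    src = normalize(source)
    n = len(kp)
    if n == 0:
        return False
    return any(src[i:i + n] == kp for i in range(len(src) - n + 1))


def split_keyphrases(keyphrases, title, abstract):
    """Split keyphrases into (present, absent) relative to title and abstract.

    Title and abstract are checked separately so that a phrase cannot
    match across the boundary between them.
    """
    present, absent = [], []
    for kp in keyphrases:
        if is_present(kp, title) or is_present(kp, abstract):
            present.append(kp)
        else:
            absent.append(kp)
    return present, absent


# Hypothetical example inputs, chosen only to illustrate the split.
title = "Keyphrase Generation Beyond the Boundaries of Title and Abstract"
abstract = "We explore whether the full text can help a neural keyphrase generation model."
gold = ["keyphrase generation", "full text", "scientific documents"]

present, absent = split_keyphrases(gold, title, abstract)
```

Under this definition, a keyphrase counted as absent from the title and abstract may still appear in the full text, which is one way to see why adding full-text sentences to the input could help.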
