K-nearest Multi-agent Deep Reinforcement Learning for Collaborative Tasks with a Variable Number of Agents

Paper and Code

Jan 18, 2022

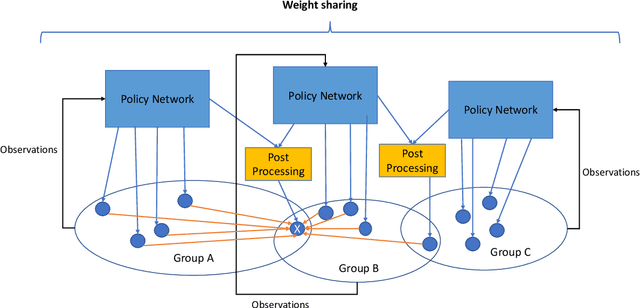

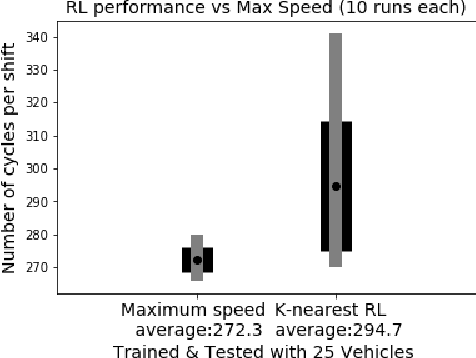

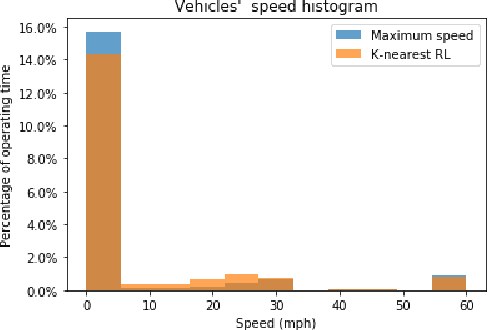

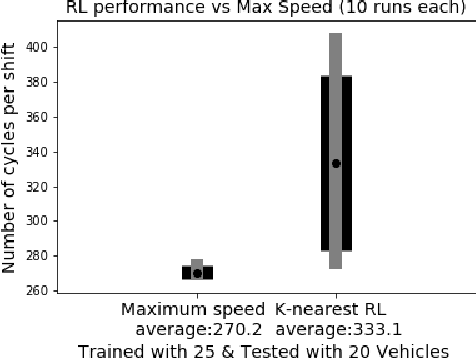

Traditionally, the performance of multi-agent deep reinforcement learning algorithms are demonstrated and validated in gaming environments where we often have a fixed number of agents. In many industrial applications, the number of available agents can change at any given day and even when the number of agents is known ahead of time, it is common for an agent to break during the operation and become unavailable for a period of time. In this paper, we propose a new deep reinforcement learning algorithm for multi-agent collaborative tasks with a variable number of agents. We demonstrate the application of our algorithm using a fleet management simulator developed by Hitachi to generate realistic scenarios in a production site.

* 2021 IEEE International Conference on Big Data (Big Data)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge