Investigating Value of Curriculum Reinforcement Learning in Autonomous Driving Under Diverse Road and Weather Conditions

Paper and Code

Mar 14, 2021

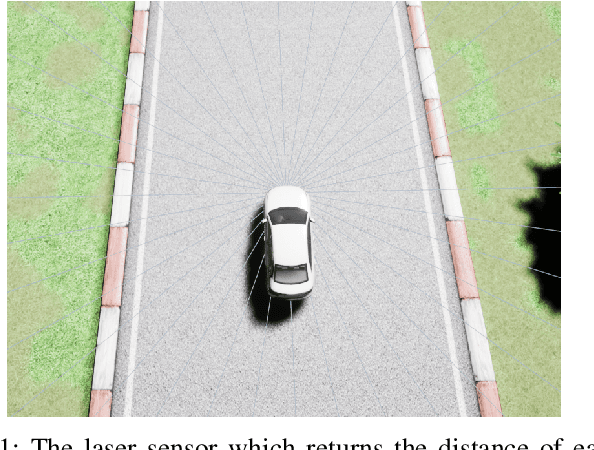

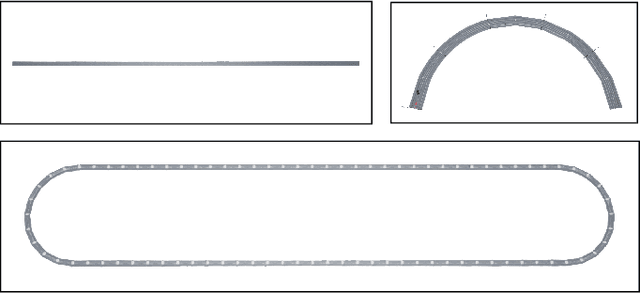

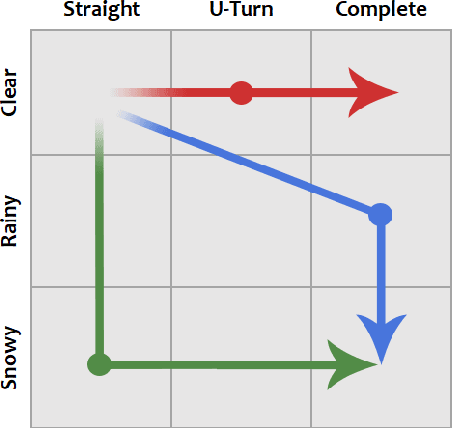

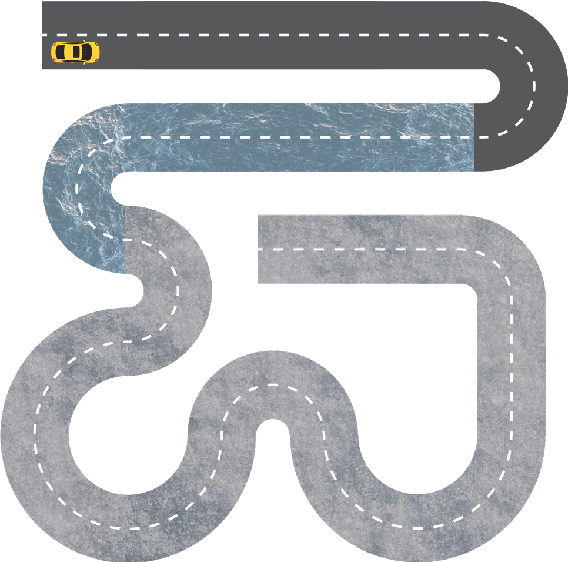

Applications of reinforcement learning (RL) are popular in autonomous driving tasks. That being said, tuning the performance of an RL agent and guaranteeing the generalization performance across variety of different driving scenarios is still largely an open problem. In particular, getting good performance on complex road and weather conditions require exhaustive tuning and computation time. Curriculum RL, which focuses on solving simpler automation tasks in order to transfer knowledge to complex tasks, is attracting attention in RL community. The main contribution of this paper is a systematic study for investigating the value of curriculum reinforcement learning in autonomous driving applications. For this purpose, we setup several different driving scenarios in a realistic driving simulator, with varying road complexity and weather conditions. Next, we train and evaluate performance of RL agents on different sequences of task combinations and curricula. Results show that curriculum RL can yield significant gains in complex driving tasks, both in terms of driving performance and sample complexity. Results also demonstrate that different curricula might enable different benefits, which hints future research directions for automated curriculum training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge