Investigating Learning in Deep Neural Networks using Layer-Wise Weight Change

Paper and Code

Dec 01, 2020

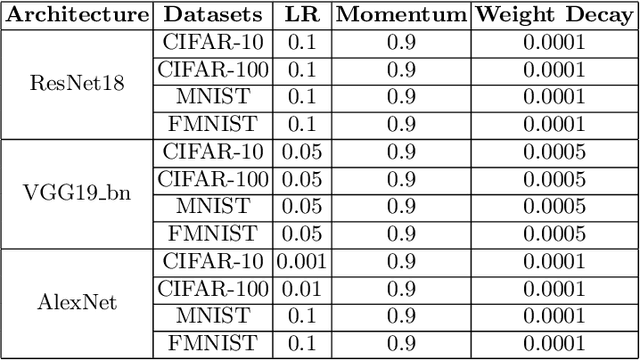

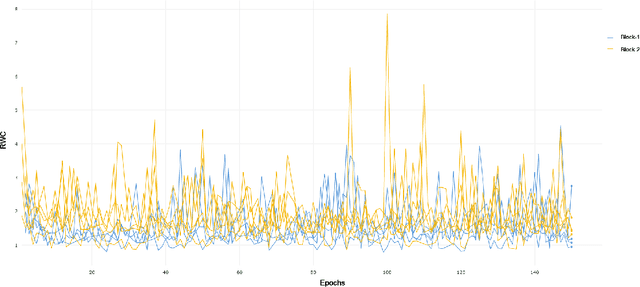

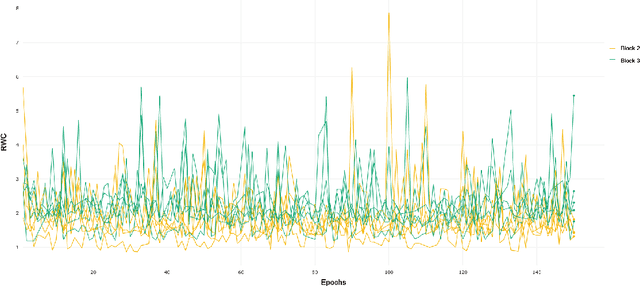

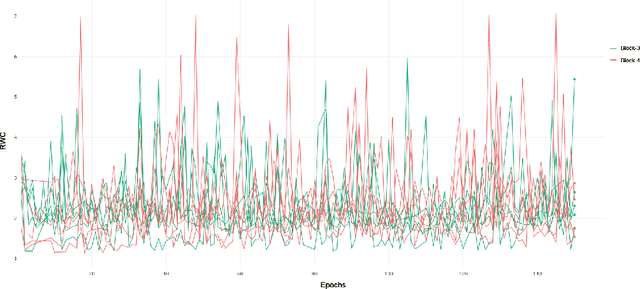

Understanding the per-layer learning dynamics of deep neural networks is of significant interest as it may provide insights into how neural networks learn and the potential for better training regimens. We investigate learning in Deep Convolutional Neural Networks (CNNs) by measuring the relative weight change of layers while training. Several interesting trends emerge in a variety of CNN architectures across various computer vision classification tasks, including the overall increase in relative weight change of later layers as compared to earlier ones.

* 14 pages, 20 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge