Intuitive Shape Editing in Latent Space


Nov 24, 2021

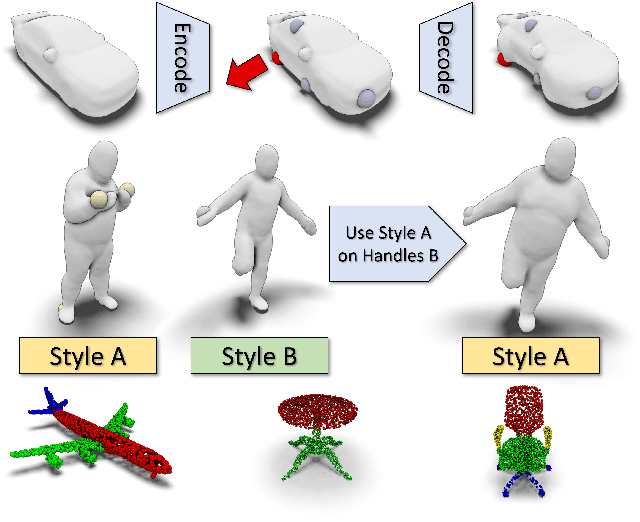

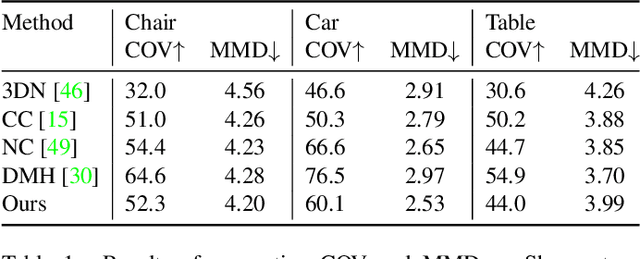

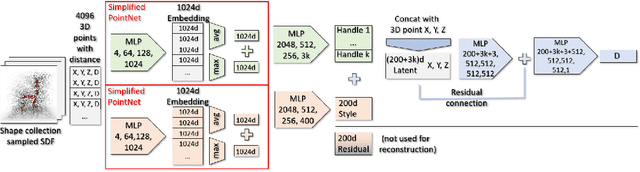

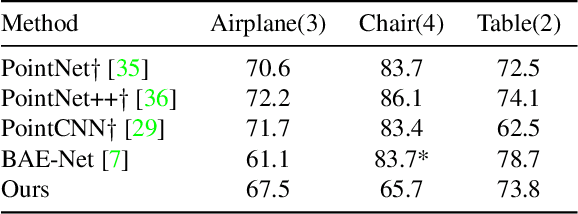

The use of autoencoders for shape generation and editing suffers from the problem that manipulations in latent space may lead to unpredictable changes in the output shape. We present an autoencoder-based method that enables intuitive shape editing in latent space. It disentangles latent sub-spaces to obtain control points on the surface and style variables that can be manipulated independently. The key idea is to add a Lipschitz-type constraint to the loss function, i.e., to bound the change of the output shape proportionally to the change in latent space, which leads to interpretable latent space representations. The control points on the surface can then be moved freely, allowing for intuitive shape editing directly in latent space. We evaluate our method by comparing it to state-of-the-art data-driven shape editing methods. Besides shape manipulation, we demonstrate the expressiveness of our control points by using them for unsupervised part segmentation.
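To make the idea of a Lipschitz-type loss term concrete, here is a minimal sketch, assuming the bound is enforced as a hinge penalty on pairs of latent codes. The penalty is zero while ||D(z1) - D(z2)|| <= L * ||z1 - z2|| and grows with any violation. The Euclidean distance on flattened outputs, the hinge form, the pairing of latent codes, and all function names (`lipschitz_penalty`, `total_loss`) are hypothetical illustrations, not the authors' exact formulation.

```python
import math


def l2(a, b):
    """Euclidean distance between two equal-length vectors (e.g. flattened point sets)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def lipschitz_penalty(decoder, z1, z2, lipschitz_const):
    """Hypothetical hinge penalty: zero while the output change stays within
    lipschitz_const times the latent change, otherwise the size of the violation."""
    out_change = l2(decoder(z1), decoder(z2))
    latent_change = l2(z1, z2)
    return max(0.0, out_change - lipschitz_const * latent_change)


def total_loss(reconstruction_loss, decoder, latent_pairs, lipschitz_const, weight):
    """Assumed combined objective: reconstruction term plus the weighted,
    pair-averaged Lipschitz-type penalty."""
    penalty = sum(
        lipschitz_penalty(decoder, z1, z2, lipschitz_const) for z1, z2 in latent_pairs
    ) / len(latent_pairs)
    return reconstruction_loss + weight * penalty


if __name__ == "__main__":
    # Toy "decoder" that stretches latent distances by a factor of 3.
    decoder = lambda z: [3.0 * x for x in z]
    pair = ([0.0, 0.0], [3.0, 4.0])  # latent distance 5, output distance 15
    print(lipschitz_penalty(decoder, *pair, lipschitz_const=1.0))  # 10.0
    print(lipschitz_penalty(decoder, *pair, lipschitz_const=5.0))  # 0.0
```

With a small constant the toy decoder is penalized for amplifying latent changes. With a large enough constant the penalty vanishes. Minimizing such a term during training discourages small latent edits from producing large, unpredictable changes in the decoded shape.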
