Intrusion Detection for Industrial Control Systems: Evaluation Analysis and Adversarial Attacks

Paper and Code

Nov 08, 2019

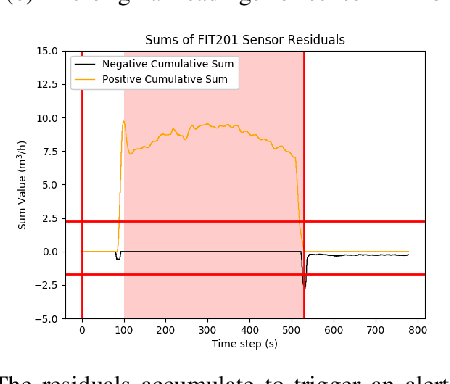

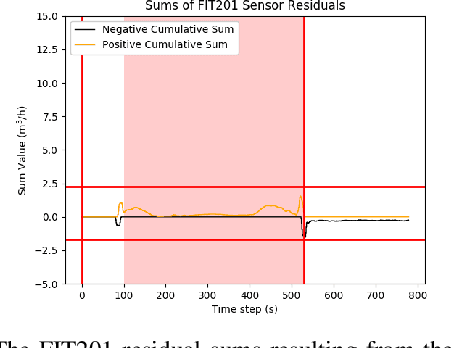

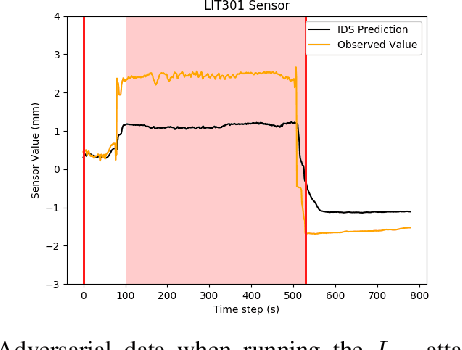

Neural networks are increasingly used in security applications for intrusion detection on industrial control systems. In this work we examine two areas that must be considered for their effective use. Firstly, is their vulnerability to adversarial attacks when used in a time series setting. Secondly, is potential over-estimation of performance arising from data leakage artefacts. To investigate these areas we implement a long short-term memory (LSTM) based intrusion detection system (IDS) which effectively detects cyber-physical attacks on a water treatment testbed representing a strong baseline IDS. For investigating adversarial attacks we model two different white box attackers. The first attacker is able to manipulate sensor readings on a subset of the Secure Water Treatment (SWaT) system. By creating a stream of adversarial data the attacker is able to hide the cyber-physical attacks from the IDS. For the cyber-physical attacks which are detected by the IDS, the attacker required on average 2.48 out of 12 total sensors to be compromised for the cyber-physical attacks to be hidden from the IDS. The second attacker model we explore is an $L_{\infty}$ bounded attacker who can send fake readings to the IDS, but to remain imperceptible, limits their perturbations to the smallest $L_{\infty}$ value needed. Additionally, we examine data leakage problems arising from tuning for $F_1$ score on the whole SWaT attack set and propose a method to tune detection parameters that does not utilise any attack data. If attack after-effects are accounted for then our new parameter tuning method achieved an $F_1$ score of 0.811$\pm$0.0103.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge