Intervention Generative Adversarial Networks

Paper and Code

Aug 09, 2020

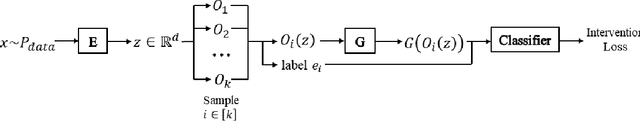

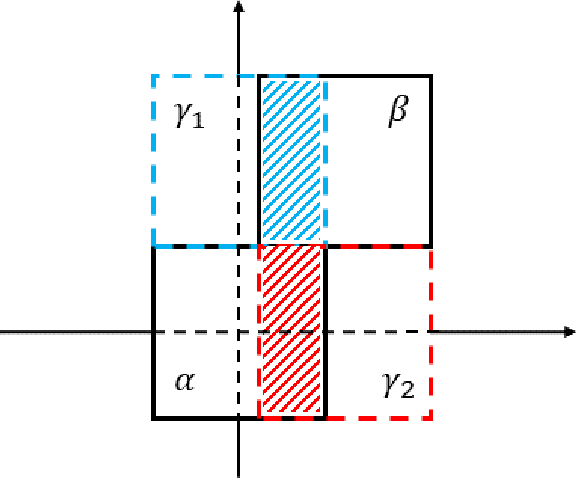

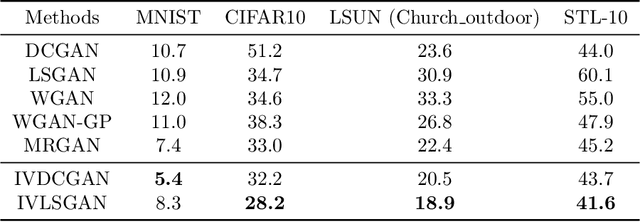

In this paper, we propose a novel approach for stabilizing the training of Generative Adversarial Networks (GANs) and alleviating the mode collapse problem. The main idea is to introduce a regularization term, which we call the intervention loss, into the objective. We refer to the resulting generative model as Intervention Generative Adversarial Networks (IVGAN). By perturbing the latent representations of real images, obtained from an auxiliary encoder network, with Gaussian-invariant interventions and penalizing the dissimilarity between the distributions of the resulting generated images, the intervention loss provides more informative gradients for the generator, significantly improving the training stability of GANs. We demonstrate the effectiveness and efficiency of our method through theoretical analysis and thorough evaluation on standard real-world datasets as well as the stacked MNIST dataset.
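To make the idea of a Gaussian-invariant intervention concrete, here is a minimal NumPy sketch. It assumes one illustrative choice of intervention: replacing a block of latent coordinates with fresh standard normal noise. The abstract does not specify the exact intervention, so the function name `block_substitution`, the latent dimension, and the block indices are hypothetical, and random Gaussian vectors stand in for the encoder outputs. If the latent codes follow N(0, I), the perturbed codes do too, which is the invariance property the name suggests.

```python
import numpy as np

def block_substitution(z, block, rng):
    """Replace the coordinates listed in `block` with fresh N(0, 1) noise.

    Assumed, illustrative intervention: if the rows of z are N(0, I),
    the rows of the result are N(0, I) as well.
    """
    z_new = z.copy()
    z_new[:, block] = rng.standard_normal((z.shape[0], len(block)))
    return z_new

rng = np.random.default_rng(0)

# Stand-in for latent codes E(x) of real images (hypothetical: 8-dim latents).
z = rng.standard_normal((10000, 8))

# Intervene on coordinates 2 and 3; all other coordinates are left untouched.
block = np.arange(2, 4)
z_int = block_substitution(z, block, rng)

# Empirical mean and variance should stay close to 0 and 1 after the intervention.
print(z_int.mean(), z_int.var())
```

In a full model, the generator would be applied to `z_int` for several different blocks, and the intervention loss would penalize differences between the resulting image distributions. That part depends on details the abstract does not give, so it is left out of this sketch.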
