Interpretable Small Training Set Image Segmentation Network Originated from Multi-Grid Variational Model

Paper and Code

Jun 25, 2023

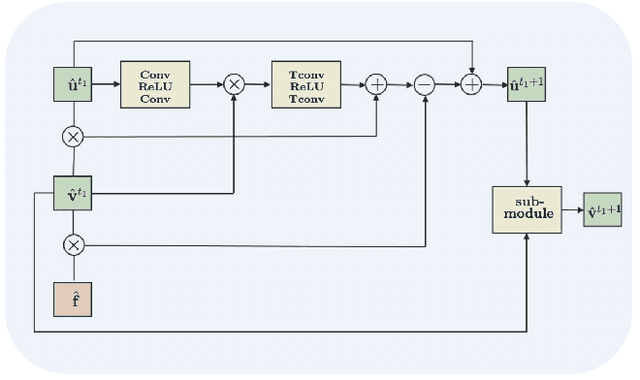

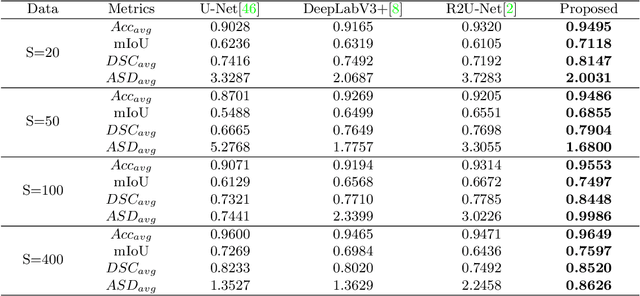

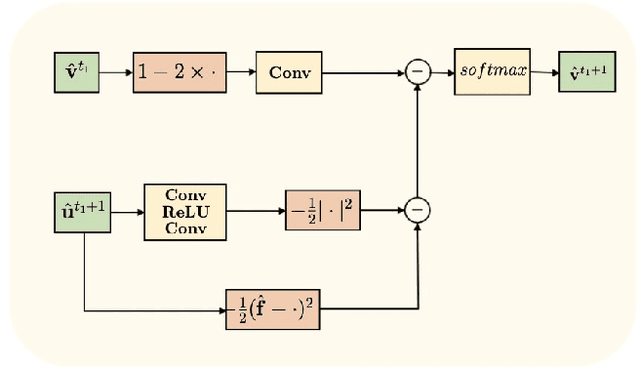

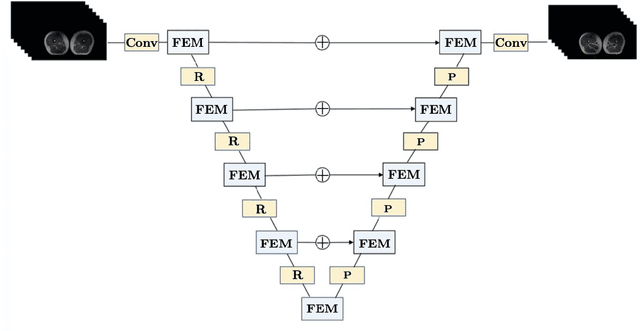

The main objective of image segmentation is to divide an image into homogeneous regions for further analysis. This is a significant and crucial task in many applications such as medical imaging. Deep learning (DL) methods have been proposed and widely used for image segmentation. However, these methods usually require a large amount of manually segmented data as training data and suffer from poor interpretability (known as the black box problem). The classical Mumford-Shah (MS) model is effective for segmentation and provides a piece-wise smooth approximation of the original image. In this paper, we replace the hand-crafted regularity term in the MS model with a data adaptive generalized learnable regularity term and use a multi-grid framework to unroll the MS model and obtain a variational model-based segmentation network with better generalizability and interpretability. This approach allows for the incorporation of learnable prior information into the network structure design. Moreover, the multi-grid framework enables multi-scale feature extraction and offers a mathematical explanation for the effectiveness of the U-shaped network structure in producing good image segmentation results. Due to the proposed network originates from a variational model, it can also handle small training sizes. Our experiments on the REFUGE dataset, the White Blood Cell image dataset, and 3D thigh muscle magnetic resonance (MR) images demonstrate that even with smaller training datasets, our method yields better segmentation results compared to related state of the art segmentation methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge