Interpretable Embeddings for Segmentation-Free Single-Cell Analysis in Multiplex Imaging


Nov 02, 2024

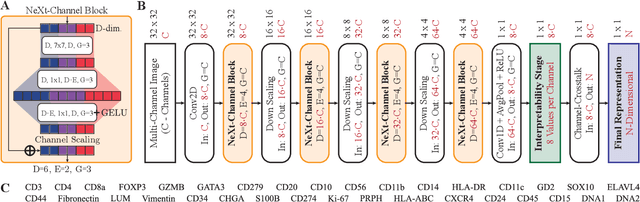

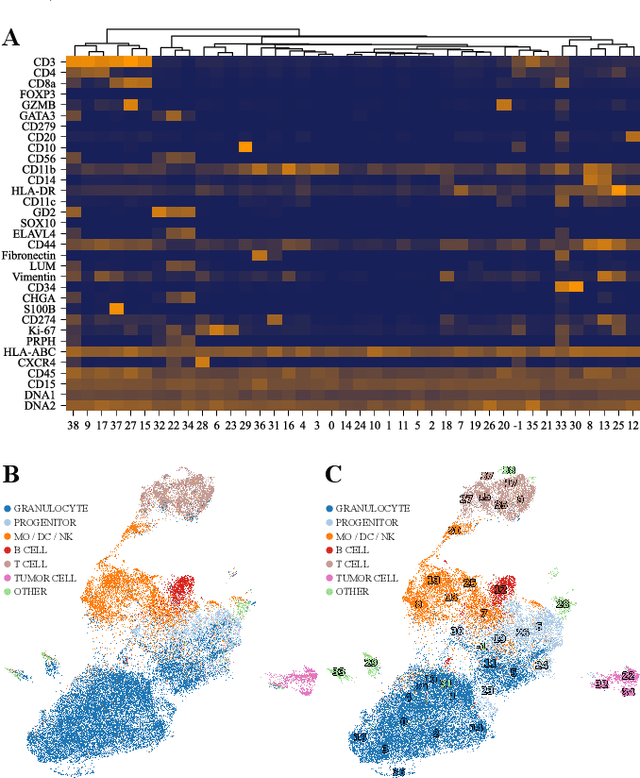

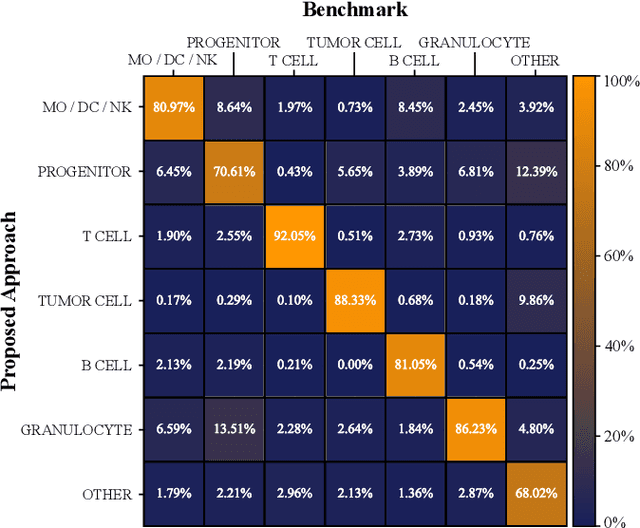

Multiplex Imaging (MI) enables the simultaneous visualization of multiple biological markers in separate imaging channels at subcellular resolution, providing valuable insights into cell-type heterogeneity and spatial organization. However, current computational pipelines rely on cell segmentation algorithms, which require laborious fine-tuning and can introduce downstream errors due to inaccurate single-cell representations. We propose a segmentation-free deep learning approach that leverages grouped convolutions to learn interpretable embedded features from each imaging channel, enabling robust cell-type identification without manual feature selection. Validated on an Imaging Mass Cytometry dataset of 1.8 million cells from neuroblastoma patients, our method enables the accurate identification of known cell types, showcasing its scalability and suitability for high-dimensional MI data.
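To make the idea of grouped convolutions concrete, here is a minimal sketch in NumPy. It is not the authors' architecture: the function names `grouped_conv2d` and `channel_embeddings`, the patch size, the filter count, and the random (untrained) weights are all assumptions chosen for illustration. The point it shows is that when each imaging channel gets its own filter bank, every embedding feature can be traced back to exactly one marker.

```python
import numpy as np

def grouped_conv2d(patch, weights):
    """Apply a separate filter bank to each channel (one group per channel).

    patch:   (C, H, W) image patch, one channel per marker.
    weights: (C, F, k, k) filters; weights[c] is only applied to channel c.
    Returns: (C, F, H-k+1, W-k+1) feature maps, still indexed by source channel.
    """
    C, H, W = patch.shape
    Cw, F, k, _ = weights.shape
    assert C == Cw, "one filter bank per channel is required"
    # All k x k windows per channel: shape (C, H-k+1, W-k+1, k, k)
    windows = np.lib.stride_tricks.sliding_window_view(patch, (k, k), axis=(1, 2))
    # Contract each window with the filters of its own channel only
    return np.einsum("chwij,cfij->cfhw", windows, weights)

def channel_embeddings(patch, weights):
    """ReLU followed by global average pooling: F features per channel."""
    feats = np.maximum(grouped_conv2d(patch, weights), 0.0)
    return feats.mean(axis=(2, 3))  # shape (C, F)

# Hypothetical example: 4 markers, 16x16 patch, 3 filters of size 3x3 per marker
rng = np.random.default_rng(0)
patch = rng.random((4, 16, 16))
weights = rng.standard_normal((4, 3, 3, 3))  # random stand-in for learned filters

emb = channel_embeddings(patch, weights)

# Perturb only marker 2 and recompute the embedding
perturbed = patch.copy()
perturbed[2] += 1.0
emb_perturbed = channel_embeddings(perturbed, weights)

# Indices of the embedding rows that changed
changed_rows = np.flatnonzero(~np.isclose(emb, emb_perturbed).all(axis=1))
print(emb.shape, changed_rows)
```

Because no filter mixes channels, perturbing one marker leaves the embedding rows of all other markers untouched. A standard (ungrouped) convolution would spread that change across every output feature, which is what makes per-marker attribution harder.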
