Interlock-Free Multi-Aspect Rationalization for Text Classification

Paper and Code

May 13, 2022

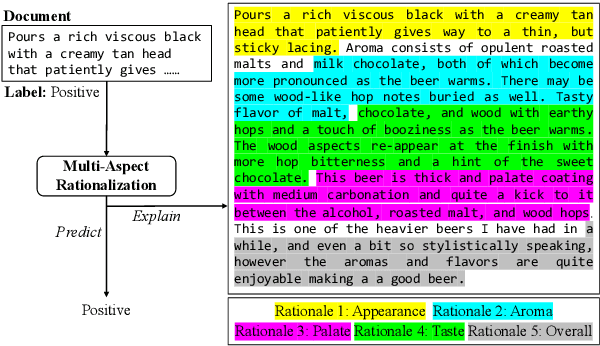

Explanation is important for text classification tasks. One prevalent type of explanation is rationales, which are text snippets of input text that suffice to yield the prediction and are meaningful to humans. A lot of research on rationalization has been based on the selective rationalization framework, which has recently been shown to be problematic due to the interlocking dynamics. In this paper, we show that we address the interlocking problem in the multi-aspect setting, where we aim to generate multiple rationales for multiple outputs. More specifically, we propose a multi-stage training method incorporating an additional self-supervised contrastive loss that helps to generate more semantically diverse rationales. Empirical results on the beer review dataset show that our method improves significantly the rationalization performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge