Instant Neural Representation for Interactive Volume Rendering

Paper and Code

Jul 23, 2022

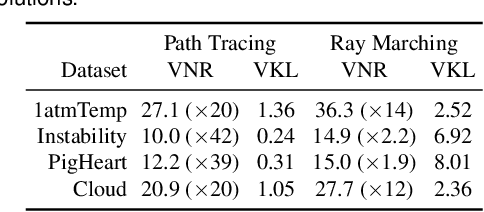

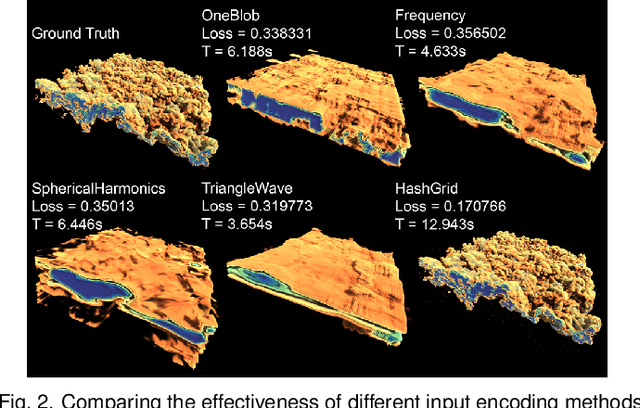

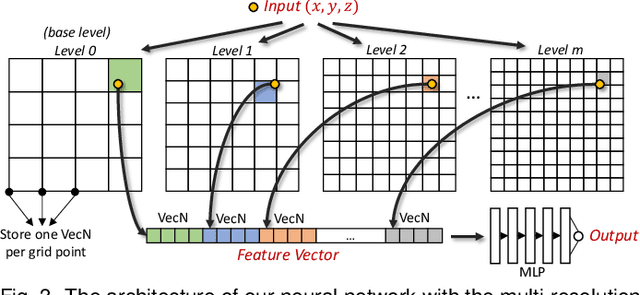

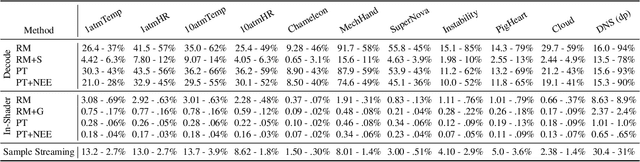

Neural networks have shown great potential in compressing volumetric data for scientific visualization. However, due to the high cost of training and inference, such volumetric neural representations have thus far only been applied to offline data processing and non-interactive rendering. In this paper, we demonstrate that by simultaneously leveraging modern GPU tensor cores, a native CUDA neural network framework, and online training, we can achieve high-performance and high-fidelity interactive ray tracing using volumetric neural representations. Additionally, our method is fully generalizable and can adapt to time-varying datasets on-the-fly. We present three strategies for online training with each leveraging a different combination of the GPU, the CPU, and out-of-core-streaming techniques. We also develop three rendering implementations that allow interactive ray tracing to be coupled with real-time volume decoding, sample streaming, and in-shader neural network inference. We demonstrate that our volumetric neural representations can scale up to terascale for regular-grid volume visualization, and can easily support irregular data structures such as OpenVDB, unstructured, AMR, and particle volume data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge