Improving Longer-range Dialogue State Tracking

Feb 27, 2021

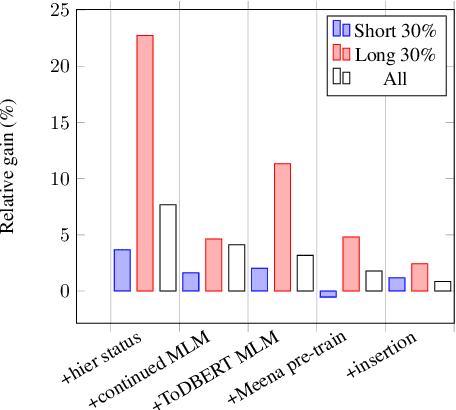

Dialogue state tracking (DST) is a pivotal component of task-oriented dialogue systems. A DST model can capture belief states in short conversations relatively easily, but the task becomes harder as a dialogue grows longer, because more distracting context accumulates. In this paper, we aim to improve the overall performance of DST, with a special focus on longer dialogues. We tackle this problem from three perspectives: 1) a model designed for hierarchical slot status prediction; 2) a balanced training procedure for generic and task-specific language understanding; and 3) data perturbation that strengthens the model's ability to handle longer conversations. We conduct experiments on the MultiWOZ benchmark and use a set of ablation tests to demonstrate the effectiveness of each component, particularly on longer conversations.