Improving Lexical Embeddings for Robust Question Answering

Feb 28, 2022

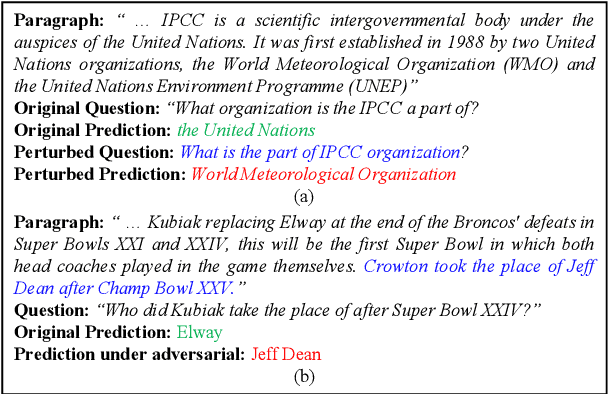

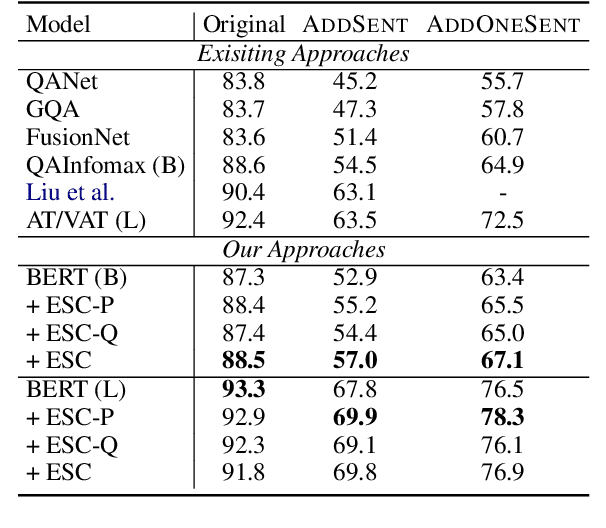

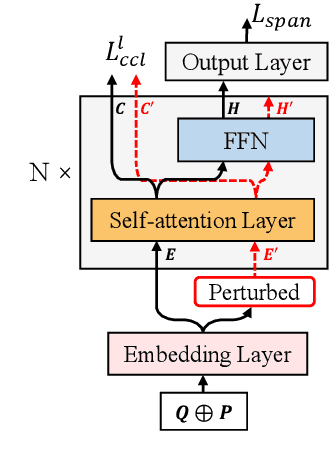

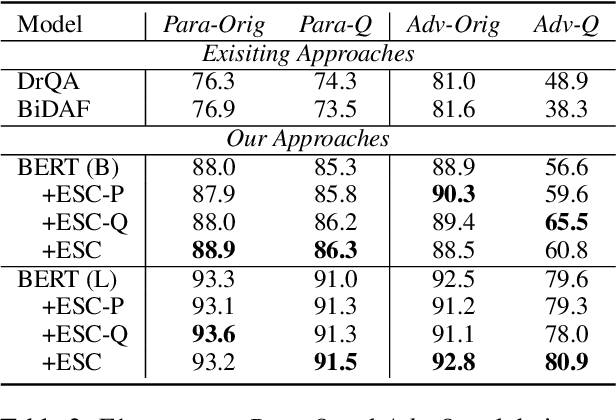

Recent techniques in Question Answering (QA) have achieved remarkable performance improvements, with some QA models even surpassing human performance. However, whether these models truly understand language remains doubtful, and they show clear limitations when facing adversarial examples. To strengthen the robustness and generalization ability of QA models, we propose a representation Enhancement via Semantic and Context constraints (ESC) approach that improves the robustness of lexical embeddings. Specifically, we insert perturbations under semantic constraints and train enhanced contextual representations with a context-constraint loss, so that the model better distinguishes the context clues for the correct answer. Experimental results show that our approach achieves significant robustness improvements on four adversarial test sets.