Improving both domain robustness and domain adaptability in machine translation

Dec 15, 2021

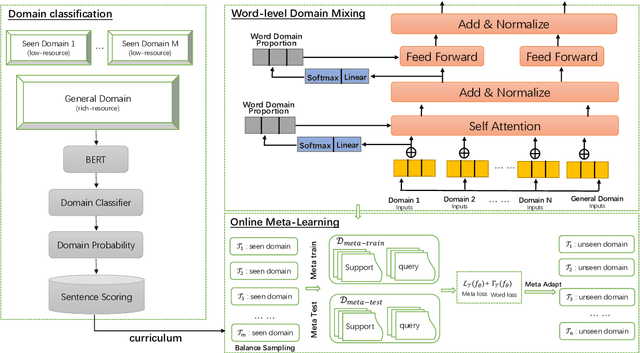

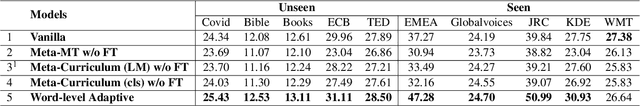

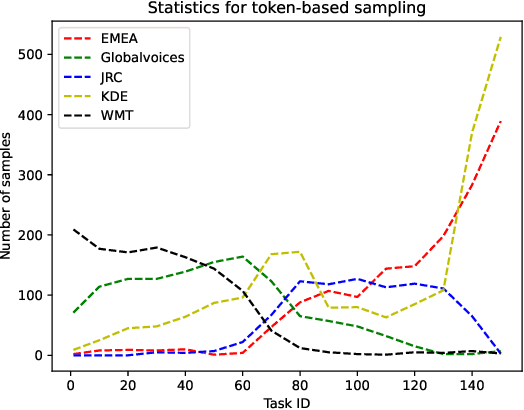

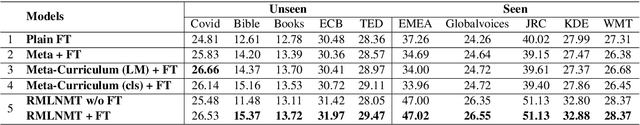

We address two problems of domain adaptation in neural machine translation. First, we want to reach domain robustness, i.e., good translation quality both on the domains seen in the training data and on domains unseen in the training data. Second, we want our systems to be adaptive, i.e., it should be possible to fine-tune them with just hundreds of in-domain parallel sentences. In this paper, we introduce a novel combination of two previous approaches: word adaptive modelling, which addresses domain robustness, and meta-learning, which addresses domain adaptability. We present empirical results showing that this combination improves both properties.