Improved Optimistic Algorithm For The Multinomial Logit Contextual Bandit

Paper and Code

Nov 28, 2020

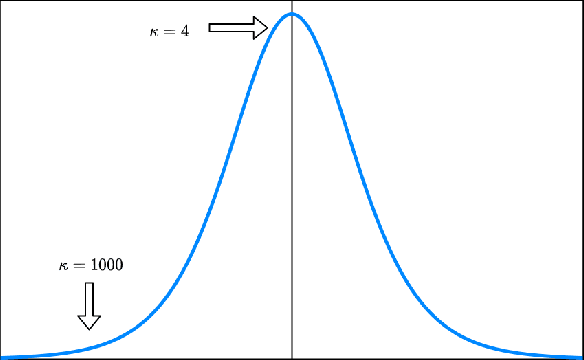

We consider a dynamic assortment selection problem where the goal is to offer a sequence of assortments of cardinality at most $K$, out of $N$ items, to minimize the expected cumulative regret (loss of revenue). The feedback is given by a multinomial logit (MNL) choice model. This sequential decision making problem is studied under the MNL contextual bandit framework. The existing algorithms for MNL contexual bandit have frequentist regret guarantees as $\tilde{\mathrm{O}}(\kappa\sqrt{T})$, where $\kappa$ is an instance dependent constant. $\kappa$ could be arbitrarily large, e.g. exponentially dependent on the model parameters, causing the existing regret guarantees to be substantially loose. We propose an optimistic algorithm with a carefully designed exploration bonus term and show that it enjoys $\tilde{\mathrm{O}}(\sqrt{T})$ regret. In our bounds, the $\kappa$ factor only affects the poly-log term and not the leading term of the regret bounds.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge