Illumination adaptive person reid based on teacher-student model and adversarial training


Feb 13, 2020

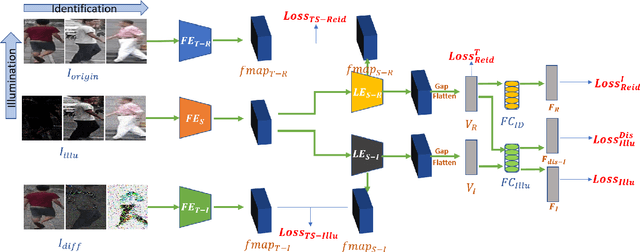

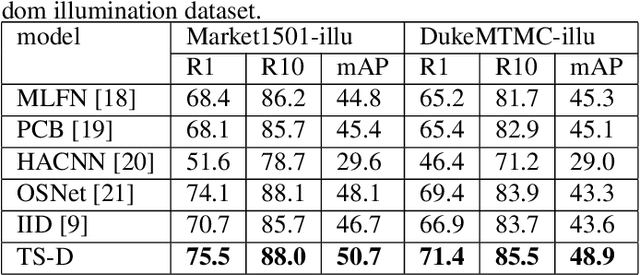

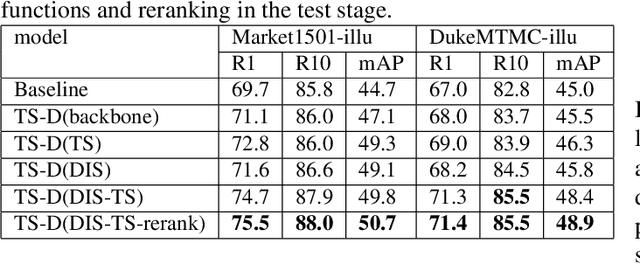

Most existing work in Person Re-identification (ReID) focuses on settings where illumination is either constant or fluctuates very little. However, changes in illumination level can significantly affect the robustness of a ReID algorithm. To address this problem, we propose a Two-Stream Network that separates ReID features from lighting features to enhance ReID performance. Its innovations are threefold: (1) A discriminative entropy loss that ensures the ReID features contain no lighting information. (2) A ReID Teacher model, trained on images under "neutral" lighting conditions, that guides ReID classification. (3) An illumination Teacher model, trained on the differences between the illumination-adjusted and original images, that guides illumination classification. We construct two augmented datasets by synthetically applying a set of predefined lighting conditions to two of the most popular ReID benchmarks: Market1501 and DukeMTMC-ReID. Experiments demonstrate that our algorithm outperforms other state-of-the-art methods and is particularly effective on images captured under extremely low light.
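The abstract does not give the form of the discriminative entropy loss, so the following is only a minimal sketch of one common way to express the idea: if an illumination classifier is applied to the ReID features, its prediction should be close to uniform, meaning the features carry no usable lighting information. The function names (`softmax`, `prediction_entropy`, `illumination_confusion_loss`) and the choice of negative entropy as the loss are assumptions for illustration, not the authors' formulation.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def prediction_entropy(logits):
    """Shannon entropy (in nats) of the softmax distribution over lighting classes."""
    probs = softmax(logits)
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def illumination_confusion_loss(logits):
    """Hypothetical loss: negative entropy of the illumination prediction.

    Minimizing it pushes the prediction made from ReID features toward
    uniform, i.e. the features reveal nothing about the lighting condition.
    """
    return -prediction_entropy(logits)


# Illumination logits predicted from two hypothetical ReID feature vectors.
uninformative = [0.0, 0.0, 0.0, 0.0]   # classifier cannot tell the lighting
informative = [10.0, 0.0, 0.0, 0.0]    # classifier is confident about the lighting

loss_uninformative = illumination_confusion_loss(uninformative)
loss_informative = illumination_confusion_loss(informative)
```

Under this sketch, the uninformative features receive the lower loss, reaching the minimum of `-log(K)` for `K` lighting classes, while features that leak lighting information are penalized.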
