HyperPINN: Learning parameterized differential equations with physics-informed hypernetworks

Paper and Code

Oct 28, 2021

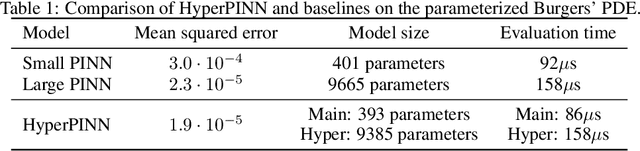

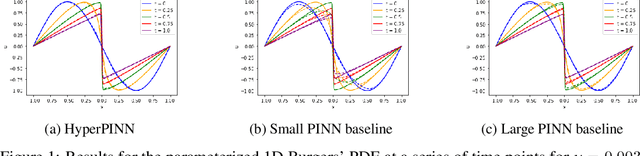

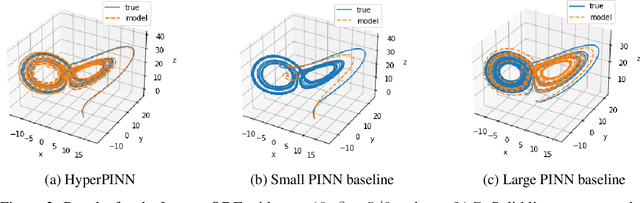

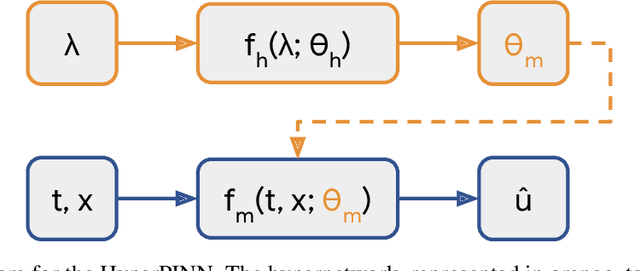

Many types of physics-informed neural network models have been proposed in recent years as approaches for learning solutions to differential equations. When a particular task requires solving a differential equation at multiple parameterizations, this requires either re-training the model, or expanding its representation capacity to include the parameterization -- both solution that increase its computational cost. We propose the HyperPINN, which uses hypernetworks to learn to generate neural networks that can solve a differential equation from a given parameterization. We demonstrate with experiments on both a PDE and an ODE that this type of model can lead to neural network solutions to differential equations that maintain a small size, even when learning a family of solutions over a parameter space.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge