Human Observation-Inspired Trajectory Prediction for Autonomous Driving in Mixed-Autonomy Traffic Environments

Paper and Code

Feb 06, 2024

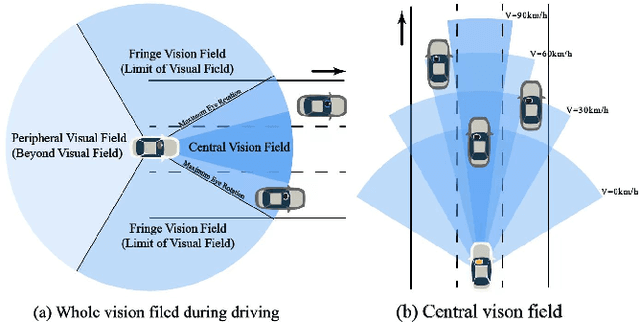

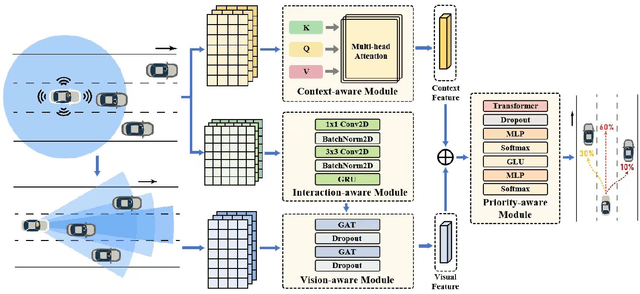

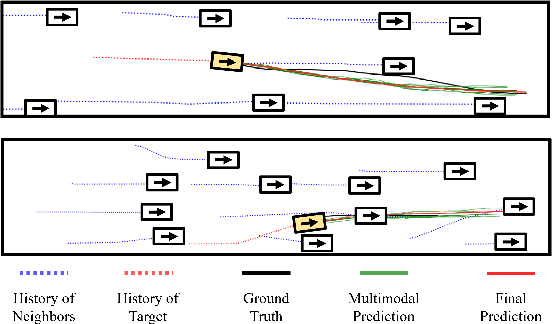

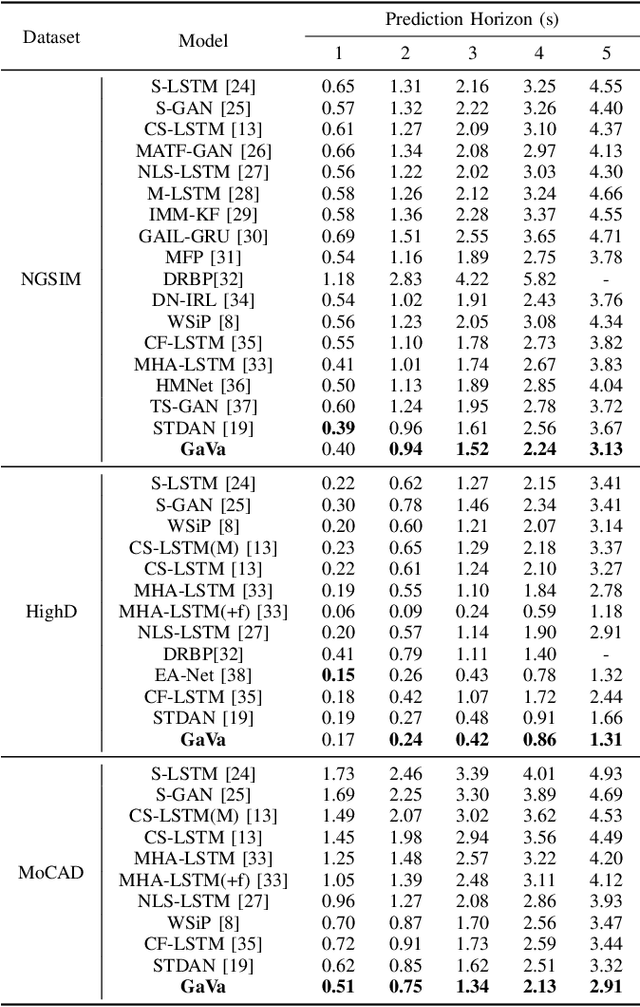

In the burgeoning field of autonomous vehicles (AVs), trajectory prediction remains a formidable challenge, especially in mixed autonomy environments. Traditional approaches often rely on computational methods such as time-series analysis. Our research diverges significantly by adopting an interdisciplinary approach that integrates principles of human cognition and observational behavior into trajectory prediction models for AVs. We introduce a novel "adaptive visual sector" mechanism that mimics the dynamic allocation of attention human drivers exhibit based on factors like spatial orientation, proximity, and driving speed. Additionally, we develop a "dynamic traffic graph" using Convolutional Neural Networks (CNN) and Graph Attention Networks (GAT) to capture spatio-temporal dependencies among agents. Benchmark tests on the NGSIM, HighD, and MoCAD datasets reveal that our model (GAVA) outperforms state-of-the-art baselines by at least 15.2%, 19.4%, and 12.0%, respectively. Our findings underscore the potential of leveraging human cognition principles to enhance the proficiency and adaptability of trajectory prediction algorithms in AVs. The code for the proposed model is available at our Github.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge