How Wrong Am I? - Studying Adversarial Examples and their Impact on Uncertainty in Gaussian Process Machine Learning Models

Paper and Code

Feb 16, 2018

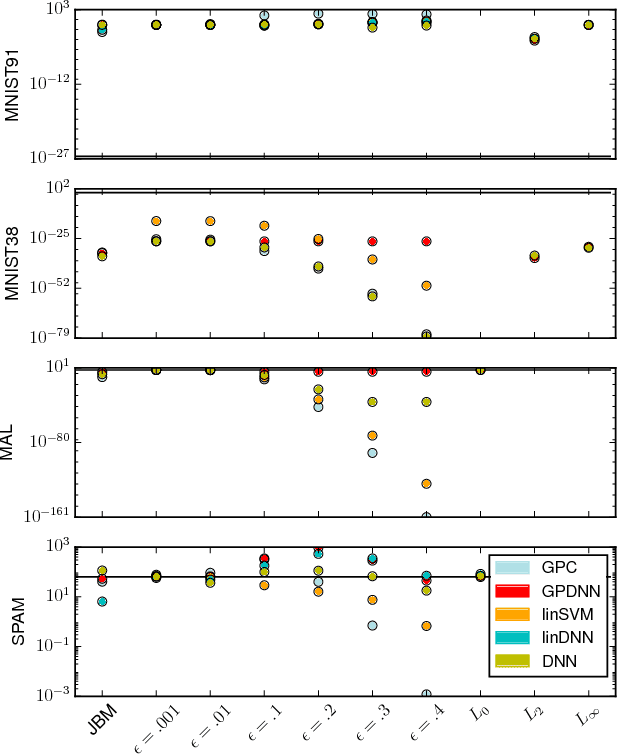

Machine learning models are vulnerable to Adversarial Examples: minor perturbations to input samples intended to deliberately cause misclassification. Current defenses against adversarial examples, especially for Deep Neural Networks (DNN), are primarily derived from empirical developments, and their security guarantees are often only justified retroactively. Many defenses therefore rely on hidden assumptions that are subsequently subverted by increasingly elaborate attacks. This is not surprising: deep learning notoriously lacks a comprehensive mathematical framework to provide meaningful guarantees. In this paper, we leverage Gaussian Processes to investigate adversarial examples in the framework of Bayesian inference. Across different models and datasets, we find deviating levels of uncertainty reflect the perturbation introduced to benign samples by state-of-the-art attacks, including novel white-box attacks on Gaussian Processes. Our experiments demonstrate that even unoptimized uncertainty thresholds already reject adversarial examples in many scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge