How Does Batch Normalization Help Binary Training?

Paper and Code

Oct 10, 2019

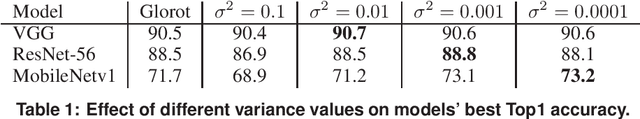

Binary Neural Networks (BNNs) are difficult to train, and suffer from drop of accuracy. It appears in practice that BNNs fail to train in the absence of Batch Normalization (BatchNorm) layer. We find the main role of BatchNorm is to avoid exploding gradients in the case of BNNs. This finding suggests that the common initialization methods developed for full-precision networks are irrelevant to BNNs. We build a theoretical study on the role of BatchNorm in binary training, backed up by numerical experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge