Hitting the Target: Stopping Active Learning at the Cost-Based Optimum


Oct 07, 2021

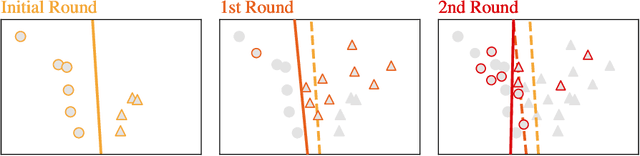

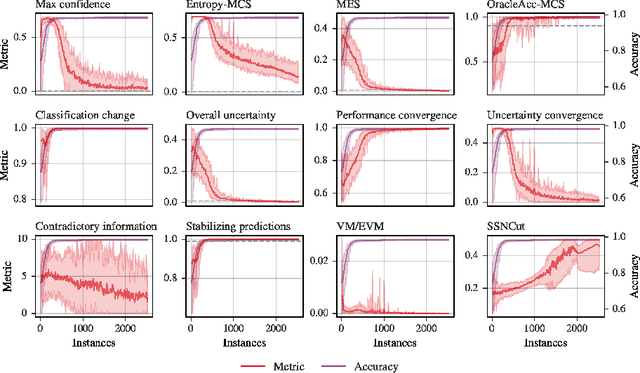

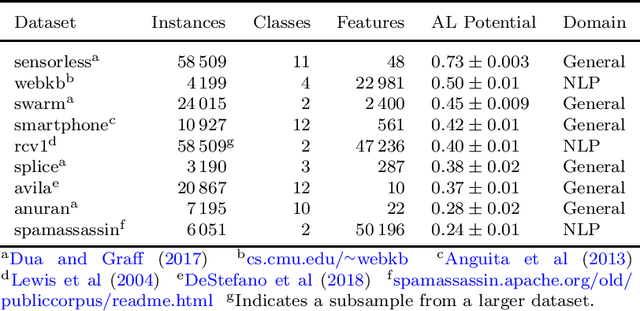

Active learning allows machine learning models to be trained using fewer labels while retaining similar performance to traditional fully supervised learning. An active learner selects the most informative data points, requests their labels, and retrains itself. While this approach is promising, it leaves an open problem of how to determine when the model is "good enough" without the additional labels required for traditional evaluation. In the past, different stopping criteria have been proposed aiming to identify the optimal stopping point. However, optimality can only be expressed as a domain-dependent trade-off between accuracy and the number of labels, and no criterion is superior in all applications. This paper is the first to give actionable advice to practitioners on what stopping criteria they should use in a given real-world scenario. We contribute the first large-scale comparison of stopping criteria, using a cost measure to quantify the accuracy/label trade-off, public implementations of all stopping criteria we evaluate, and an open-source framework for evaluating stopping criteria. Our research enables practitioners to substantially reduce labelling costs by utilizing the stopping criterion which best suits their domain.
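To make the accuracy/label trade-off concrete, here is a minimal Python sketch of how a cost-based optimum could be located on a learning curve. It is not the paper's cost measure or code: the linear cost form, the function names `total_cost` and `cost_optimal_stop`, the parameters `label_cost`, `error_cost` and `n_predictions`, and the learning-curve numbers are all assumptions made up for illustration.

```python
from typing import Sequence


def total_cost(n_labels: int, accuracy: float, label_cost: float,
               error_cost: float, n_predictions: int) -> float:
    """Assumed linear cost: labelling spend plus expected misclassification spend."""
    return label_cost * n_labels + error_cost * (1.0 - accuracy) * n_predictions


def cost_optimal_stop(labels: Sequence[int], accuracies: Sequence[float],
                      label_cost: float, error_cost: float,
                      n_predictions: int) -> int:
    """Return the index of the learning-curve point with the lowest total cost."""
    costs = [
        total_cost(n, acc, label_cost, error_cost, n_predictions)
        for n, acc in zip(labels, accuracies)
    ]
    return min(range(len(costs)), key=costs.__getitem__)


# Made-up learning curve: labels acquired so far and the accuracy reached.
labels = [10, 20, 40, 80, 160]
accuracies = [0.60, 0.75, 0.85, 0.88, 0.89]

# Same curve, two hypothetical domains that price a wrong prediction differently.
cheap_errors = cost_optimal_stop(labels, accuracies, label_cost=1.0,
                                 error_cost=1.0, n_predictions=1000)
costly_errors = cost_optimal_stop(labels, accuracies, label_cost=1.0,
                                  error_cost=10.0, n_predictions=1000)

print(labels[cheap_errors], labels[costly_errors])
```

With these invented numbers the optimum falls at 40 labels when errors are cheap and at 160 labels when they are ten times more expensive, which illustrates why the best stopping point depends on the domain. In practice the true accuracy curve is not observable during active learning, so a stopping criterion has to approximate this optimum without it.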
