HIRE: Distilling High-order Relational Knowledge From Heterogeneous Graph Neural Networks

Jul 25, 2022

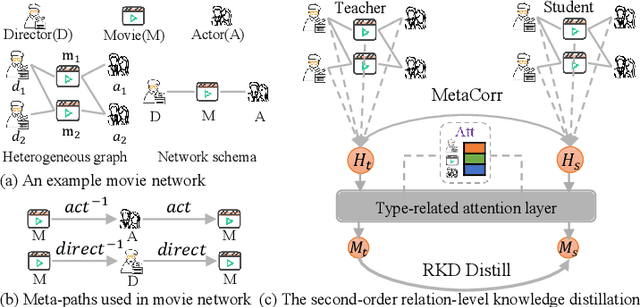

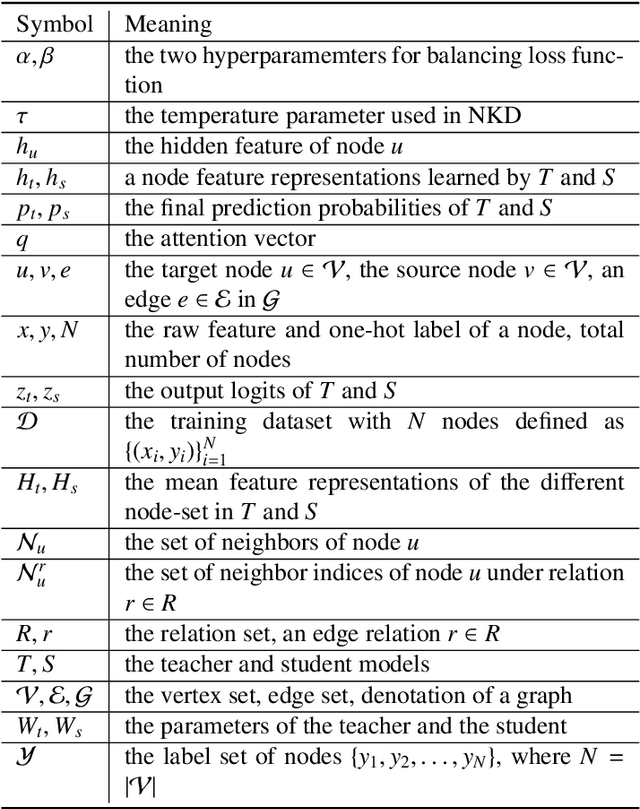

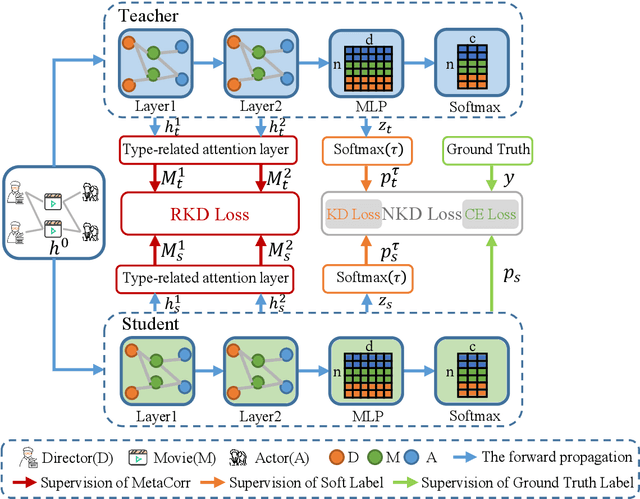

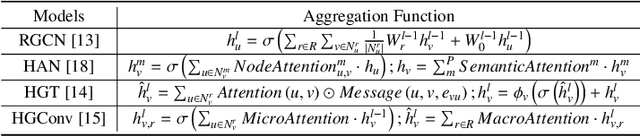

Researchers have recently proposed many heterogeneous graph neural networks (HGNNs), motivated by the ubiquity of heterogeneous graphs in both academia and industry. Instead of pursuing a more powerful HGNN model, in this paper we devise a versatile plug-and-play module that distills relational knowledge from pre-trained HGNNs. To the best of our knowledge, we are the first to propose a HIgh-order RElational (HIRE) knowledge distillation framework on heterogeneous graphs, which can significantly boost prediction performance regardless of the HGNN's model architecture. Concretely, our HIRE framework first performs first-order node-level knowledge distillation, which encodes the semantics of the teacher HGNN through its prediction logits. In parallel, second-order relation-level knowledge distillation imitates the relational correlation between the embeddings of nodes of different types generated by the teacher HGNN. Extensive experiments on various popular HGNN models and three real-world heterogeneous graphs demonstrate that our method obtains consistent and considerable performance improvements, supporting its effectiveness and generalization ability.
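The abstract does not give the loss formulas, so the following is only a minimal sketch of how the two distillation terms could be combined, not the authors' implementation. It assumes that the node-level term is a temperature-softened KL divergence between teacher and student logits, and that the relation-level term compares cosine-similarity matrices between per-type mean embeddings. All function names, the `temperature`, `alpha`, and `beta` parameters, and the choice of cosine similarity are hypothetical.

```python
import math

def log_softmax(logits, temperature=1.0):
    # Numerically stable log-softmax over temperature-scaled logits.
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    lse = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - lse for z in scaled]

def node_level_kd(student_logits, teacher_logits, temperature=2.0):
    # First-order term (assumed form): mean KL(teacher || student) on softened logits.
    total = 0.0
    for s, t in zip(student_logits, teacher_logits):
        lp = log_softmax(t, temperature)
        lq = log_softmax(s, temperature)
        total += sum(math.exp(a) * (a - b) for a, b in zip(lp, lq))
    return temperature ** 2 * total / len(student_logits)

def type_means(embeddings, node_types):
    # Average the embeddings of all nodes that share a node type.
    sums, counts = {}, {}
    for vec, t in zip(embeddings, node_types):
        acc = sums.get(t, [0.0] * len(vec))
        sums[t] = [a + b for a, b in zip(acc, vec)]
        counts[t] = counts.get(t, 0) + 1
    return {t: [x / counts[t] for x in sums[t]] for t in sorted(sums)}

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0

def relation_matrix(embeddings, node_types):
    # Type-by-type similarity matrix; its size does not depend on embedding width.
    means = type_means(embeddings, node_types)
    return [[cosine(means[a], means[b]) for b in means] for a in means]

def relation_level_kd(student_emb, teacher_emb, node_types):
    # Second-order term (assumed form): mean squared gap between relation matrices.
    rs = relation_matrix(student_emb, node_types)
    rt = relation_matrix(teacher_emb, node_types)
    diffs = [(a - b) ** 2 for row_s, row_t in zip(rs, rt) for a, b in zip(row_s, row_t)]
    return sum(diffs) / len(diffs)

def distillation_loss(student_logits, teacher_logits, student_emb, teacher_emb,
                      node_types, alpha=1.0, beta=1.0, temperature=2.0):
    # Weighted sum of both terms; in training it would be added to the task loss.
    first = node_level_kd(student_logits, teacher_logits, temperature)
    second = relation_level_kd(student_emb, teacher_emb, node_types)
    return alpha * first + beta * second
```

Because the relation matrix is indexed by node type rather than by embedding dimension, this sketch lets the student use a different hidden size than the teacher. A real implementation would compute the same quantities on tensors in a deep learning framework so that gradients flow to the student.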
