High-Throughput In-Memory Computing for Binary Deep Neural Networks with Monolithically Integrated RRAM and 90nm CMOS

Sep 16, 2019

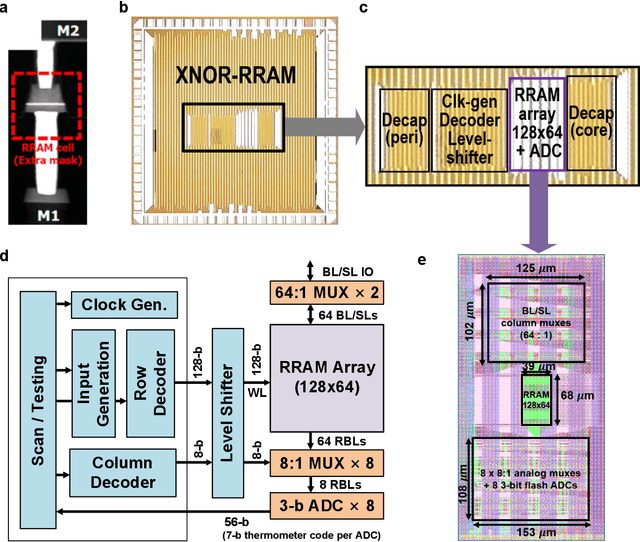

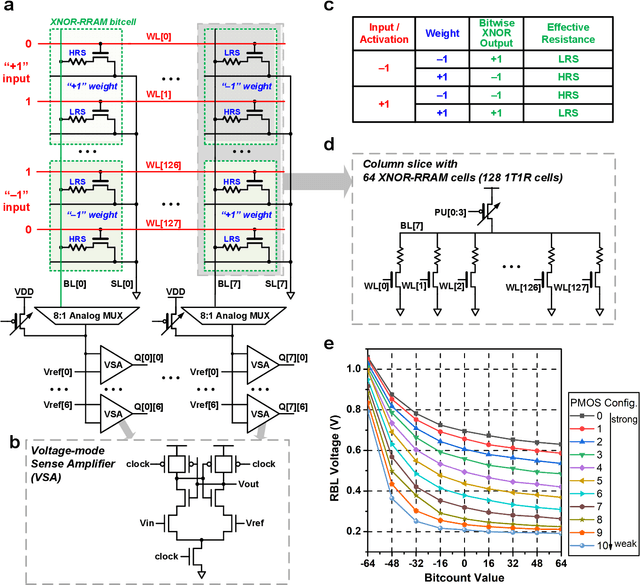

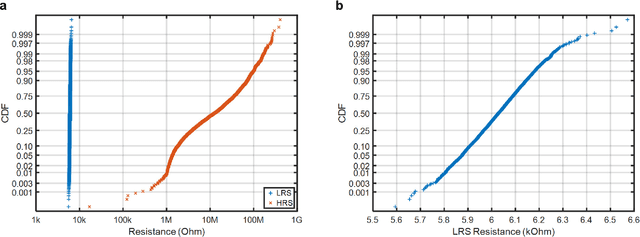

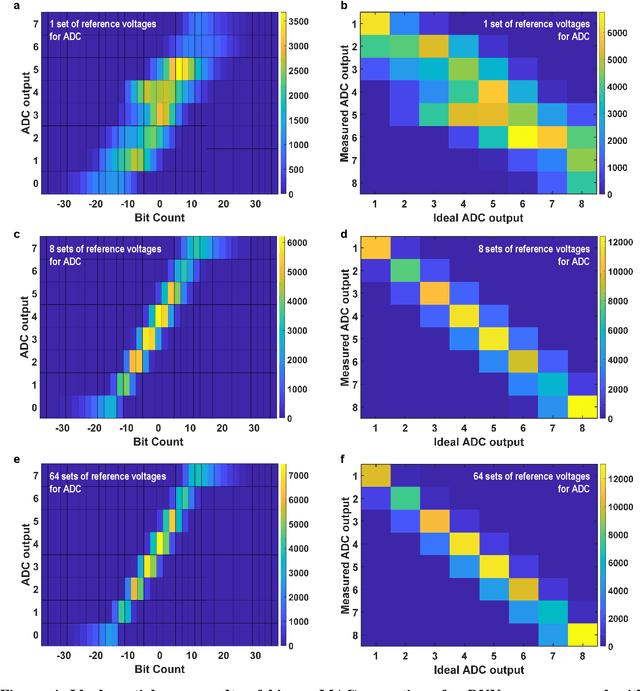

Deep learning hardware designs have been bottlenecked by conventional memories such as SRAM due to density, leakage, and parallel computing challenges. Resistive devices can address the density and volatility issues, but have been limited by peripheral circuit integration. In this work, we demonstrate a scalable RRAM-based in-memory computing design, termed XNOR-RRAM, which is fabricated in a 90nm CMOS technology with monolithic integration of RRAM devices between metal 1 and metal 2. We integrated a 128x64 RRAM array with CMOS peripheral circuits, including row/column decoders and flash analog-to-digital converters (ADCs), which together form a core component for scaling RRAM-based in-memory computing to large deep neural networks (DNNs). To maximize the parallelism of in-memory computing, we assert all 128 wordlines of the RRAM array simultaneously, perform analog computing along the bitlines, and digitize the bitline voltages using ADCs. The resistance distribution of the low-resistance states is tightened by a write-verify scheme, and the ADC offset is calibrated. Prototype chip measurements show that the proposed design achieves high binary DNN accuracy of 98.5% on MNIST and 83.5% on CIFAR-10, with an energy efficiency of 24 TOPS/W and a throughput of 158 GOPS. These results represent 5.6X, 3.2X, and 14.1X improvements in throughput, energy-delay product (EDP), and energy-delay-squared product (ED2P), respectively, compared to the state-of-the-art literature. The proposed XNOR-RRAM can enable intelligent functionalities for area- and energy-constrained edge computing devices.
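
The computation described in the abstract (all 128 wordlines asserted at once, a sum formed along each bitline, then digitized by an ADC) can be illustrated with a small idealized model. This is only a sketch under stated assumptions, not the chip's actual circuit behavior: inputs and weights are taken as ±1 values, the bitline result is modeled as an exact XNOR popcount rather than an analog voltage, and the ADC is a hypothetical uniform 8-level quantizer (the abstract does not state the ADC resolution or its reference levels). All function names are illustrative.

```python
import random

ROWS, COLS = 128, 64  # array dimensions stated in the abstract


def xnor_popcount(inputs, weights):
    """Idealized bitline result: count of rows where input and weight agree."""
    return sum(1 for x, w in zip(inputs, weights) if x == w)


def binary_dot(inputs, weights):
    """Recover the +/-1 dot product from the XNOR popcount: 2 * popcount - N."""
    return 2 * xnor_popcount(inputs, weights) - len(inputs)


def flash_adc(count, levels=8, rows=ROWS):
    """Hypothetical uniform ADC (assumption): map a count in [0, rows] to a code."""
    step = (rows + 1) / levels
    return min(int(count / step), levels - 1)


def array_mac(inputs, weight_columns):
    """Evaluate every column in one step, as if all wordlines were asserted at once."""
    return [flash_adc(xnor_popcount(inputs, col)) for col in weight_columns]


if __name__ == "__main__":
    rng = random.Random(0)
    x = [rng.choice((-1, 1)) for _ in range(ROWS)]
    w = [[rng.choice((-1, 1)) for _ in range(ROWS)] for _ in range(COLS)]
    codes = array_mac(x, w)
    print(len(codes), "ADC codes, one per bitline")
```

In this model the quantization step discards information that the exact dot product retains, which is one reason the abstract's write-verify and ADC offset calibration steps matter for accuracy on real hardware.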
