Hardness-Aware Scene Synthesis for Semi-Supervised 3D Object Detection

Paper and Code

May 27, 2024

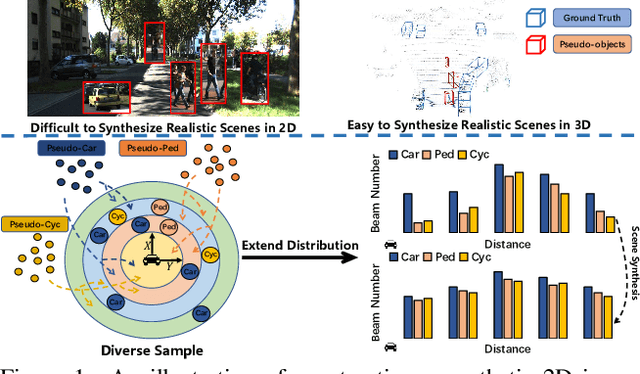

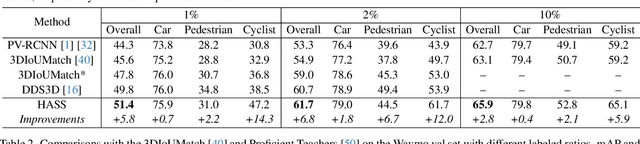

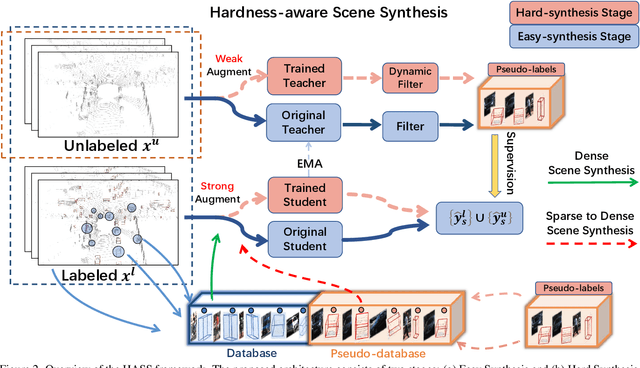

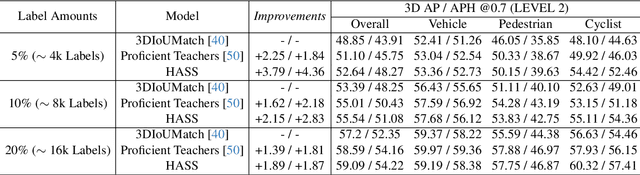

3D object detection aims to recover the 3D information of concerning objects and serves as the fundamental task of autonomous driving perception. Its performance greatly depends on the scale of labeled training data, yet it is costly to obtain high-quality annotations for point cloud data. While conventional methods focus on generating pseudo-labels for unlabeled samples as supplements for training, the structural nature of 3D point cloud data facilitates the composition of objects and backgrounds to synthesize realistic scenes. Motivated by this, we propose a hardness-aware scene synthesis (HASS) method to generate adaptive synthetic scenes to improve the generalization of the detection models. We obtain pseudo-labels for unlabeled objects and generate diverse scenes with different compositions of objects and backgrounds. As the scene synthesis is sensitive to the quality of pseudo-labels, we further propose a hardness-aware strategy to reduce the effect of low-quality pseudo-labels and maintain a dynamic pseudo-database to ensure the diversity and quality of synthetic scenes. Extensive experimental results on the widely used KITTI and Waymo datasets demonstrate the superiority of the proposed HASS method, which outperforms existing semi-supervised learning methods on 3D object detection. Code: https://github.com/wzzheng/HASS.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge