Group-CAM: Group Score-Weighted Visual Explanations for Deep Convolutional Networks

Paper and Code

Mar 26, 2021

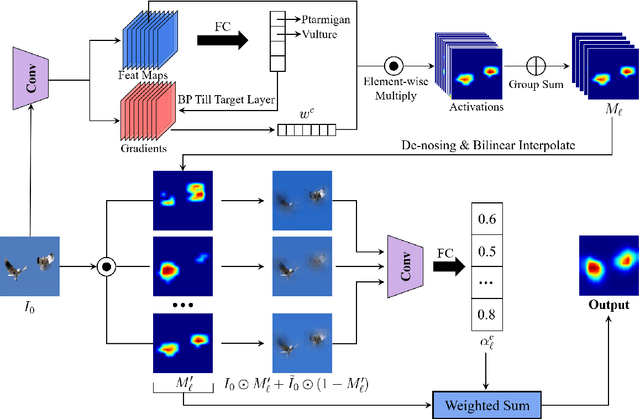

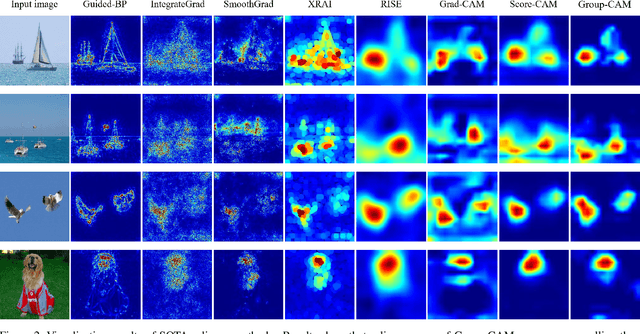

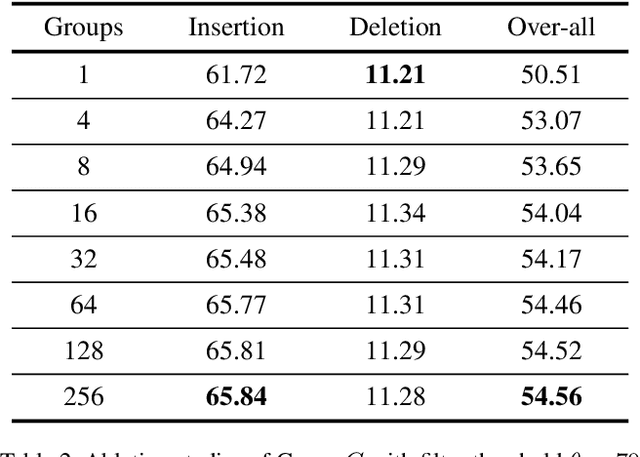

In this paper, we propose an efficient saliency map generation method, called Group score-weighted Class Activation Mapping (Group-CAM), which adopts the "split-transform-merge" strategy to generate saliency maps. Specifically, for an input image, the class activations are firstly split into groups. In each group, the sub-activations are summed and de-noised as an initial mask. After that, the initial masks are transformed with meaningful perturbations and then applied to preserve sub-pixels of the input (i.e., masked inputs), which are then fed into the network to calculate the confidence scores. Finally, the initial masks are weighted summed to form the final saliency map, where the weights are confidence scores produced by the masked inputs. Group-CAM is efficient yet effective, which only requires dozens of queries to the network while producing target-related saliency maps. As a result, Group-CAM can be served as an effective data augment trick for fine-tuning the networks. We comprehensively evaluate the performance of Group-CAM on common-used benchmarks, including deletion and insertion tests on ImageNet-1k, and pointing game tests on COCO2017. Extensive experimental results demonstrate that Group-CAM achieves better visual performance than the current state-of-the-art explanation approaches. The code is available at https://github.com/wofmanaf/Group-CAM.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge