Gradient-based Fuzzy System Optimisation via Automatic Differentiation -- FuzzyR as a Use Case

Paper and Code

Mar 18, 2024

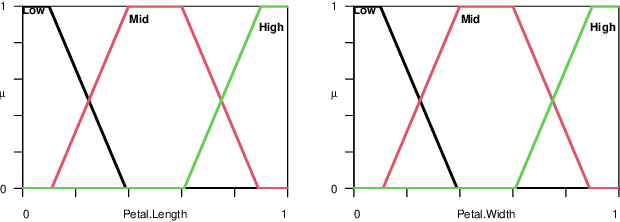

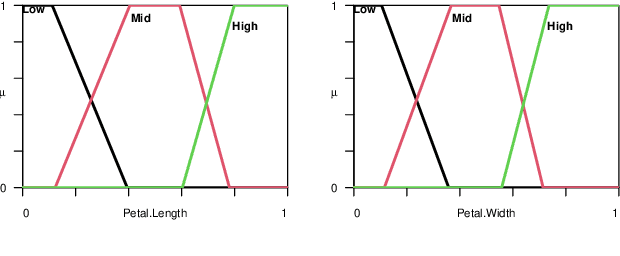

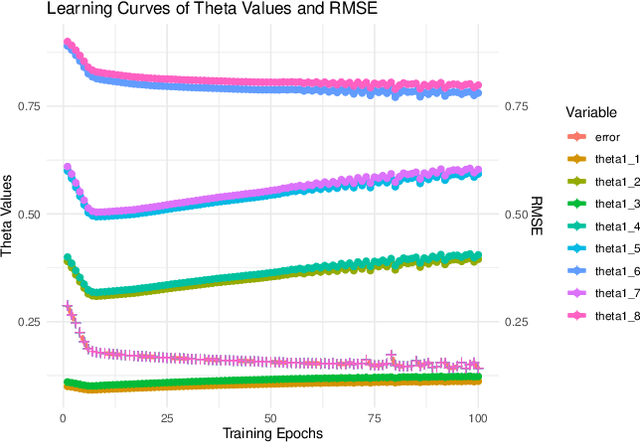

Since their introduction, fuzzy sets and systems have become an important area of research, known for its versatility in modelling, knowledge representation and reasoning, and increasingly for its potential in the context of explainable AI. While the applications of fuzzy systems are diverse, there has been comparatively little advancement in their design from a machine learning perspective. Representations such as neural networks have benefited from a boom in learning capability, driven by increased computational performance combined with advances in their training mechanisms and available tools, in particular gradient descent. The impact of these advances on fuzzy system design, by contrast, has been limited. In this paper, we discuss gradient-descent-based optimisation of fuzzy systems, focussing in particular on automatic differentiation -- crucial to neural network learning -- with a view to freeing fuzzy system designers from intricate derivative computations and allowing them to focus more on the functional and explainability aspects of their design. As a starting point, we present a use case in FuzzyR which demonstrates how current fuzzy inference system implementations can be adjusted to leverage powerful features of automatic differentiation toolsets, and we discuss the potential of this approach for the future of fuzzy system design.
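To make the idea concrete, the following is a minimal sketch of gradient descent on a fuzzy system whose derivatives come from automatic differentiation rather than hand-derived formulas. It is not FuzzyR code and not the paper's implementation: it assumes a toy two-rule, zero-order Takagi-Sugeno system with Gaussian membership functions, and it swaps in a small forward-mode (dual number) differentiator written in plain Python in place of a full automatic differentiation toolset. All names (`Dual`, `gauss`, `predict`, `loss`, `grad`), the data, and the learning rate are hypothetical choices made for illustration.

```python
import math

class Dual:
    """A value paired with its derivative w.r.t. one seeded parameter."""
    def __init__(self, val, dot=0.0):
        self.val, self.dot = val, dot

    @staticmethod
    def lift(x):
        return x if isinstance(x, Dual) else Dual(float(x))

    def __add__(self, o):
        o = Dual.lift(o)
        return Dual(self.val + o.val, self.dot + o.dot)
    __radd__ = __add__

    def __sub__(self, o):
        o = Dual.lift(o)
        return Dual(self.val - o.val, self.dot - o.dot)

    def __rsub__(self, o):
        return Dual.lift(o) - self

    def __mul__(self, o):
        o = Dual.lift(o)
        return Dual(self.val * o.val, self.dot * o.val + self.val * o.dot)
    __rmul__ = __mul__

    def __truediv__(self, o):
        o = Dual.lift(o)
        return Dual(self.val / o.val,
                    (self.dot * o.val - self.val * o.dot) / (o.val ** 2))

def dexp(x):
    """Exponential with derivative propagation."""
    x = Dual.lift(x)
    e = math.exp(x.val)
    return Dual(e, e * x.dot)

def gauss(x, c, s):
    """Gaussian membership function with centre c and width s."""
    z = (x - c) / s
    return dexp(-0.5 * z * z)

def predict(params, x):
    """Two-rule zero-order TSK output: firing-strength-weighted average."""
    c1, s1, y1, c2, s2, y2 = params
    w1, w2 = gauss(x, c1, s1), gauss(x, c2, s2)
    return (w1 * y1 + w2 * y2) / (w1 + w2)

def loss(params, data):
    """Mean squared error over (input, target) pairs."""
    total = Dual(0.0)
    for x, t in data:
        err = predict(params, x) - t
        total = total + err * err
    return total / len(data)

def grad(params, data):
    """Gradient of the loss: one forward pass per parameter, seeded with 1."""
    g = []
    for i in range(len(params)):
        seeded = [Dual(p, 1.0 if j == i else 0.0)
                  for j, p in enumerate(params)]
        g.append(loss(seeded, data).dot)
    return g

# Illustrative target: samples of sin(x) on [0, 3].
data = [(x / 10.0, math.sin(x / 10.0)) for x in range(0, 32, 2)]
params = [0.5, 1.0, 0.0, 2.5, 1.0, 0.0]  # c1, s1, y1, c2, s2, y2

initial = loss(params, data).val
for _ in range(200):
    g = grad(params, data)
    params = [p - 0.05 * gi for p, gi in zip(params, g)]  # plain gradient descent
final = loss(params, data).val
```

The point of the sketch is that `predict` and `loss` are written as ordinary forward computations, and no derivative of the membership functions or the defuzzification step is ever written out by hand. Changing the membership function shape or the rule structure only requires editing the forward code. Forward mode needs one pass per parameter, so reverse-mode tools, as used for neural networks, scale better when there are many parameters.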
