Global Locality in Event Extraction

Sep 11, 2019

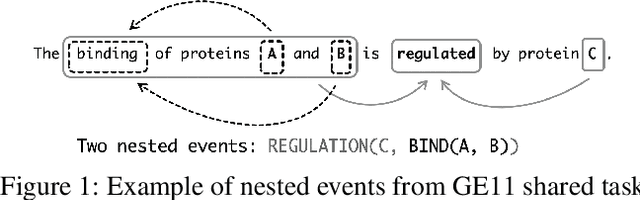

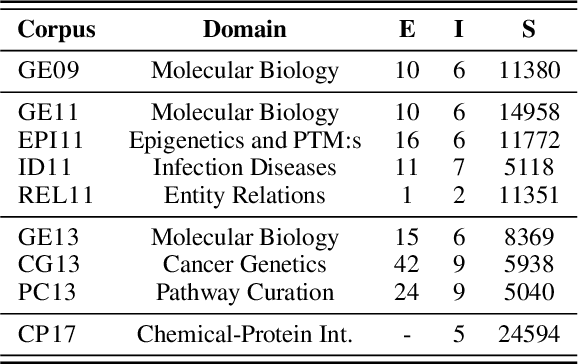

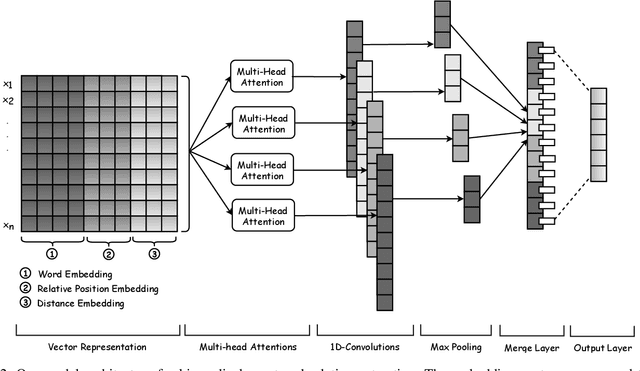

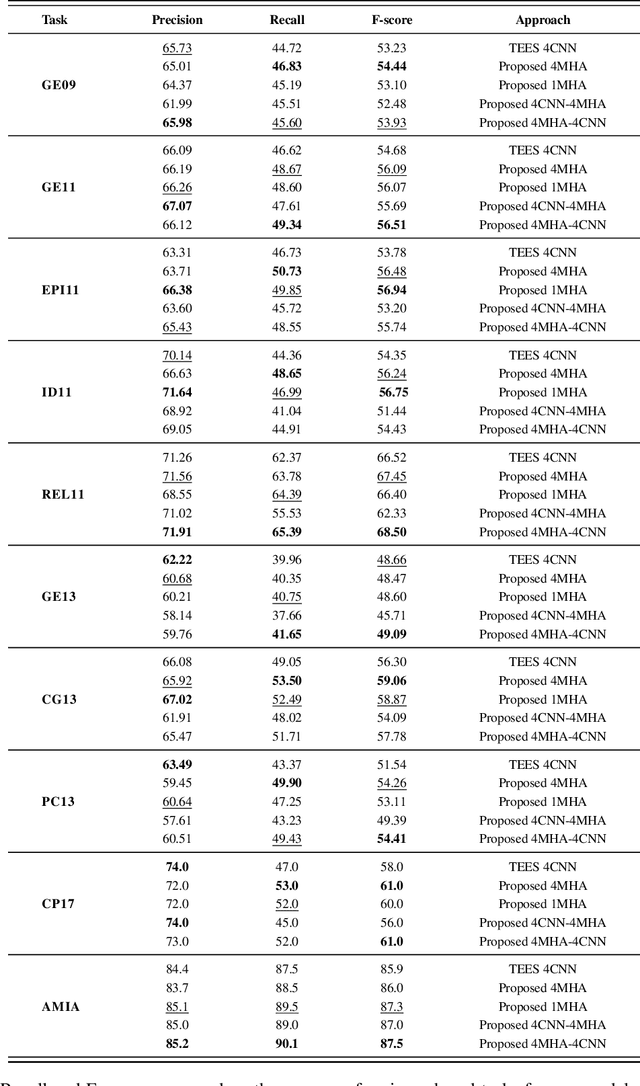

Due to the exponential growth of biomedical literature, event and relation extraction are important tasks in biomedical text mining. Most work in relation extraction detects a single entity-pair mention in a short span of text, which is not ideal given the long sentences that appear in biomedical contexts. We propose an approach to both event and relation extraction that simultaneously predicts relationships between all mention pairs in a text. Our model includes a set of multi-head attentions and convolutions, an adaptation of the transformer architecture, which gives self-attention the ability to strengthen dependencies among related elements and models the interaction between features extracted by multiple attention heads. Experimental results demonstrate that our approach outperforms the state of the art on a set of benchmark biomedical corpora, including the BioNLP 2009, 2011, and 2013 and BioCreative 2017 shared tasks.
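To make the idea of scoring all mention pairs in one pass more concrete, here is a minimal toy sketch in plain Python. It is not the authors' model: the abstract gives no layer sizes, projections, or scoring function, so everything below is an assumption made for illustration. The heads simply split the feature axis with no learned projections, the convolution runs over token positions with a fixed hypothetical kernel, and pairs are scored with a plain dot product. The names `multi_head_attention`, `conv1d_same`, and `score_all_pairs` are likewise hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with queries = keys = values = tokens.

    Assumption: no learned projection matrices, to keep the sketch short.
    """
    d = len(tokens[0])
    out = []
    for q in tokens:
        logits = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(logits)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


def multi_head_attention(tokens, num_heads):
    """Split the feature axis into heads, attend per head, then concatenate."""
    d = len(tokens[0])
    assert d % num_heads == 0, "feature size must divide evenly across heads"
    size = d // num_heads
    heads = [
        self_attention([t[h * size:(h + 1) * size] for t in tokens])
        for h in range(num_heads)
    ]
    return [[x for head in heads for x in head[i]] for i in range(len(tokens))]


def conv1d_same(tokens, kernel):
    """Zero-padded 1D convolution over token positions, shared across features."""
    n, d = len(tokens), len(tokens[0])
    half = len(kernel) // 2
    out = []
    for i in range(n):
        row = [0.0] * d
        for k, w in enumerate(kernel):
            j = i + k - half
            if 0 <= j < n:
                for f in range(d):
                    row[f] += w * tokens[j][f]
        out.append(row)
    return out


def score_all_pairs(tokens, mention_positions):
    """Encode the sequence once, then score every ordered mention pair."""
    attended = multi_head_attention(tokens, num_heads=2)
    feats = conv1d_same(attended, kernel=[0.25, 0.5, 0.25])  # hypothetical kernel
    scores = {}
    for a in mention_positions:
        for b in mention_positions:
            if a != b:
                # Dot-product scorer: an illustrative stand-in, not the paper's classifier.
                scores[(a, b)] = sum(x * y for x, y in zip(feats[a], feats[b]))
    return scores


# Toy input: four token vectors, three of which are treated as entity mentions.
tokens = [
    [1.0, 0.0, 0.5, 0.2],
    [0.0, 1.0, 0.1, 0.3],
    [0.9, 0.1, 0.4, 0.2],
    [0.2, 0.8, 0.0, 0.5],
]
pair_scores = score_all_pairs(tokens, mention_positions=[0, 2, 3])
```

The point of the sketch is the control flow: the text is encoded a single time, and all mention pairs are then scored from the shared representation, rather than re-encoding a short span for each pair.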
