GLINT-RU: Gated Lightweight Intelligent Recurrent Units for Sequential Recommender Systems


Jun 06, 2024

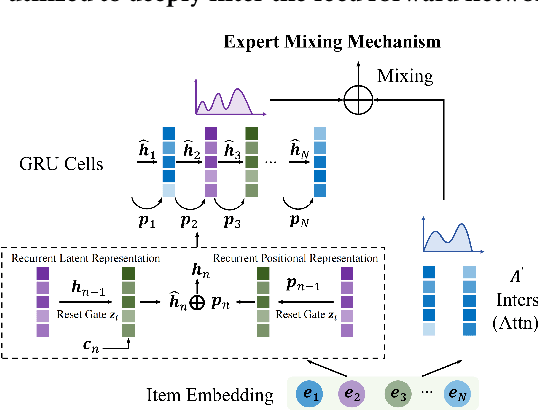

Transformer-based models have gained significant attention in Sequential Recommender Systems (SRSs), where they demonstrate remarkable proficiency in capturing user-item interactions. However, such attention-based frameworks incur substantial computational overhead and extended inference time. To address this problem, this paper proposes GLINT-RU, a novel and efficient sequential recommendation framework that leverages a dense selective Gated Recurrent Unit (GRU) module to accelerate inference. It is a pioneering work that further exploits the potential of efficient GRU modules in SRSs. The GRU module lies at the heart of GLINT-RU and plays a crucial role in substantially reducing both inference time and GPU memory usage. Through the integration of a dense selective gate, the framework captures both long-term and short-term item dependencies, enabling the adaptive generation of item scores. GLINT-RU further integrates a mixing block that enriches the representation with global user-item interaction information to improve recommendation quality. Moreover, we design a gated Multi-layer Perceptron (MLP) for the framework, in which the information is deeply filtered. Extensive experiments on three datasets highlight the effectiveness and efficiency of GLINT-RU. GLINT-RU achieves exceptional inference speed and prediction accuracy, outperforming existing baselines based on Recurrent Neural Networks (RNNs), Transformers, MLPs, and State Space Models (SSMs). These results establish a new standard in sequential recommendation and highlight the potential of GLINT-RU as a fresh approach to recommender systems.
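To make the recurrent idea behind the abstract concrete, here is a minimal sketch of a plain, textbook GRU cell that encodes an interaction sequence in a single left-to-right pass and scores candidate items by dot product. It is not the paper's dense selective GRU, mixing block, or gated MLP, none of which are specified in the abstract. The names `GRUCell`, `encode_sequence`, and `score_items`, the omission of bias terms, and the dot-product scoring are all assumptions made for illustration.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def init_matrix(rows, cols, rng, scale=0.1):
    """Small random weights; a trained model would learn these."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


class GRUCell:
    """Textbook GRU cell without biases (hypothetical helper, not GLINT-RU itself)."""

    def __init__(self, input_size, hidden_size, seed=0):
        rng = random.Random(seed)
        self.hidden_size = hidden_size
        # One input-to-hidden (W) and hidden-to-hidden (U) matrix per gate:
        # z = update gate, r = reset gate, n = candidate state.
        self.W = {g: init_matrix(hidden_size, input_size, rng) for g in "zrn"}
        self.U = {g: init_matrix(hidden_size, hidden_size, rng) for g in "zrn"}

    def step(self, x, h):
        wz, uz = matvec(self.W["z"], x), matvec(self.U["z"], h)
        wr, ur = matvec(self.W["r"], x), matvec(self.U["r"], h)
        z = [sigmoid(a + b) for a, b in zip(wz, uz)]
        r = [sigmoid(a + b) for a, b in zip(wr, ur)]
        # The reset gate decides how much of the old state feeds the candidate.
        reset_h = [ri * hi for ri, hi in zip(r, h)]
        wn, un = matvec(self.W["n"], x), matvec(self.U["n"], reset_h)
        n = [math.tanh(a + b) for a, b in zip(wn, un)]
        # Blend old state and candidate; some libraries swap the roles of z and 1 - z.
        return [(1 - zi) * hi + zi * ni for zi, hi, ni in zip(z, h, n)]


def encode_sequence(cell, item_embeddings):
    """One pass over the history: cost grows linearly with sequence length."""
    h = [0.0] * cell.hidden_size
    for x in item_embeddings:
        h = cell.step(x, h)
    return h


def score_items(user_state, candidate_embeddings):
    """Dot-product score of each candidate against the final hidden state."""
    return [sum(u * c for u, c in zip(user_state, emb)) for emb in candidate_embeddings]
```

A caller would build one `GRUCell`, pass the embeddings of a user's past items to `encode_sequence`, and rank candidates with `score_items`. The point of the sketch is that each new interaction costs one fixed-size `step`, whereas self-attention compares every position with every other position in the sequence.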
