Generalized Self-concordant Hessian-barrier algorithms

Paper and Code

Nov 06, 2019

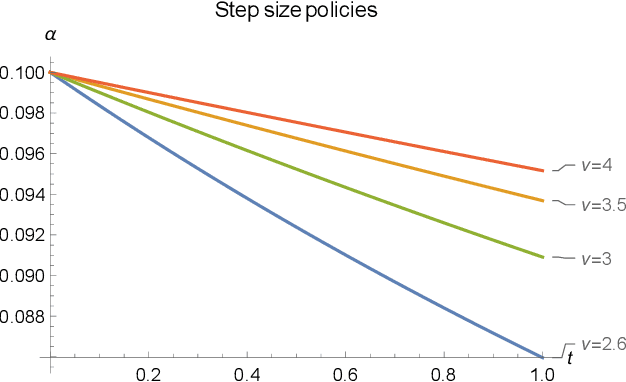

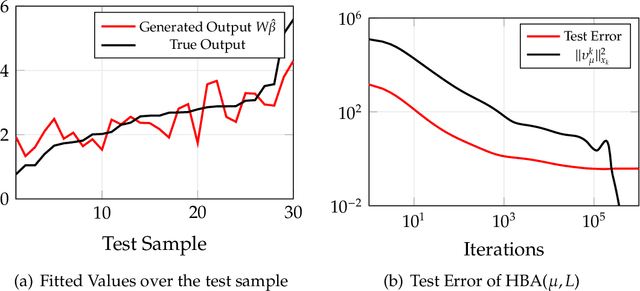

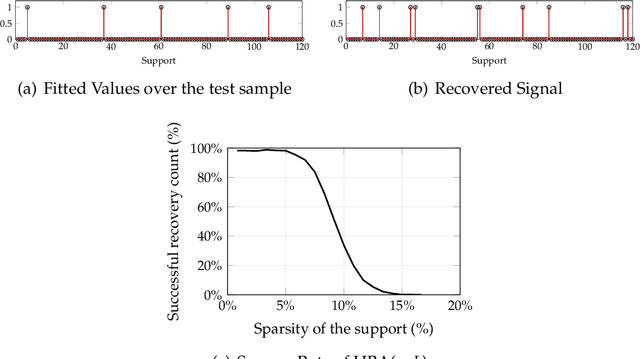

Many problems in statistical learning, imaging, and computer vision involve the optimization of a non-convex objective function with singularities at the boundary of the feasible set. For such challenging instances, we develop a new interior-point technique building on the Hessian-barrier algorithm recently introduced in Bomze, Mertikopoulos, Schachinger and Staudigl, [SIAM J. Opt. 2019 29(3), pp. 2100-2127], where the Riemannian metric is induced by a generalized self-concordant function. This class of functions is sufficiently general to include most of the commonly used barrier functions in the literature of interior point methods. We prove global convergence to an approximate stationary point of the method, and in cases where the feasible set admits an easily computable self-concordant barrier, we verify worst-case optimal iteration complexity of the method. Applications in non-convex statistical estimation and $L^{p}$-minimization are discussed to given the efficiency of the method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge