Generalization Analysis for Game-Theoretic Machine Learning


Oct 09, 2014

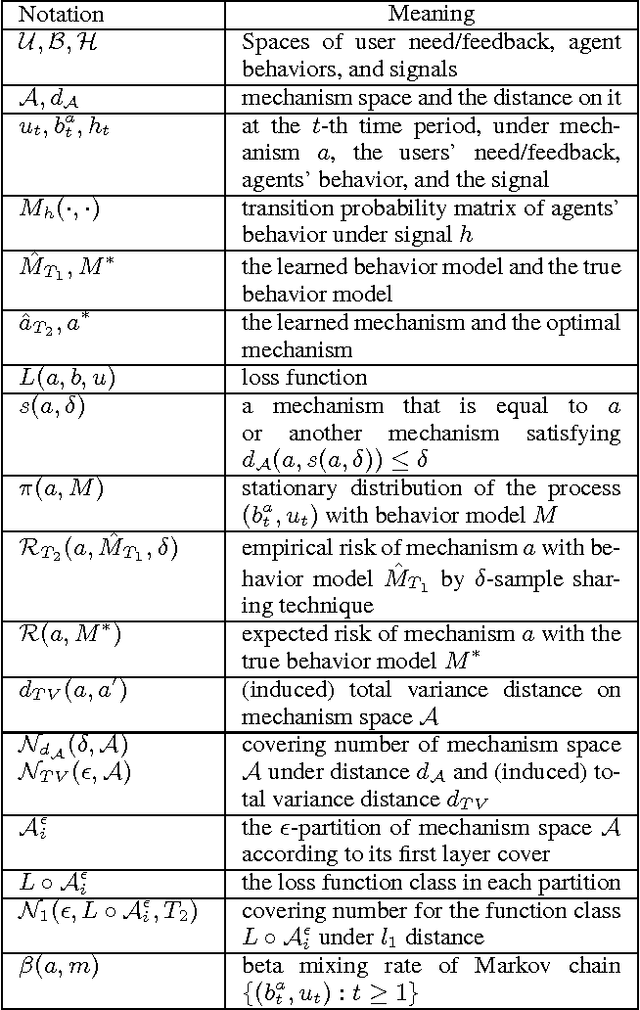

For Internet applications such as sponsored search, care must be taken when using machine learning to optimize their mechanisms (e.g., auctions), because the self-interested agents in these applications may change their behavior (and thus the data distribution) in response to the mechanism. To tackle this problem, a framework called game-theoretic machine learning (GTML) was recently proposed. GTML first learns a Markov behavior model to characterize agents' behaviors, and then learns the optimal mechanism by simulating how agents' behaviors change in response to the mechanism. While GTML has demonstrated practical success, its generalization analysis is challenging because the behavior data are non-i.i.d. and depend on the mechanism. To address this challenge, we first decompose the generalization error for GTML into the behavior learning error and the mechanism learning error. Second, for the behavior learning error, we obtain novel non-asymptotic error bounds for both parametric and non-parametric behavior learning methods. Third, for the mechanism learning error, we derive a uniform convergence bound based on a new concept, the nested covering number of the mechanism space, together with generalization analysis techniques developed for mixing sequences. To the best of our knowledge, this is the first work on the generalization analysis of GTML, and we believe it has general implications for the theoretical analysis of other complicated machine learning problems.
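The two-stage structure described in the abstract (learn a Markov behavior model, then pick a mechanism by simulating behavior under each candidate) can be illustrated with a toy sketch. Everything below is an assumption made for illustration and is not taken from the paper: a single bidder with discrete bid levels, a reserve price as the only mechanism parameter, a count-based transition estimate, and hypothetical names such as `fit_behavior_model` and `learn_mechanism`. It does not implement the paper's analysis or error bounds.

```python
import random
from collections import defaultdict

BID_LEVELS = [1, 2, 3, 4]  # hypothetical discrete bid levels (also used as reserve candidates)


def generate_history(n, rng, start_bid=2):
    """Toy ground truth (an assumption): the bidder raises the bid after losing
    and sometimes lowers it after winning. Reserves are randomized while logging."""
    history, bid = [], start_bid
    for _ in range(n):
        reserve = rng.choice(BID_LEVELS)
        won = bid >= reserve
        if won:
            nxt = max(bid - 1, 1) if rng.random() < 0.3 else bid
        else:
            nxt = min(bid + 1, 4) if rng.random() < 0.7 else bid
        history.append((bid, won, nxt))
        bid = nxt
    return history


def fit_behavior_model(history):
    """Stage 1: estimate P(next_bid | bid, won) by counting transitions."""
    counts = defaultdict(lambda: defaultdict(int))
    for bid, won, nxt in history:
        counts[(bid, won)][nxt] += 1
    model = {}
    for key, nxt_counts in counts.items():
        total = sum(nxt_counts.values())
        model[key] = {b: c / total for b, c in nxt_counts.items()}
    return model


def simulate_revenue(model, reserve, steps, rng, start_bid=2):
    """Roll the learned Markov model forward under one reserve price and
    return the average per-round revenue (a winning bidder pays the reserve)."""
    bid, revenue = start_bid, 0.0
    for _ in range(steps):
        won = bid >= reserve
        if won:
            revenue += reserve
        dist = model.get((bid, won))
        if dist is None:  # state never observed in the logs: keep the bid unchanged
            continue
        bids, probs = zip(*dist.items())
        bid = rng.choices(bids, weights=probs)[0]
    return revenue / steps


def learn_mechanism(model, candidates, steps=2000, seed=0):
    """Stage 2: choose the reserve with the highest simulated revenue."""
    rng = random.Random(seed)
    scores = {r: simulate_revenue(model, r, steps, rng) for r in candidates}
    return max(scores, key=scores.get), scores


if __name__ == "__main__":
    logs = generate_history(5000, random.Random(1))
    behavior_model = fit_behavior_model(logs)
    best_reserve, scores = learn_mechanism(behavior_model, BID_LEVELS)
    print(best_reserve, scores)
```

In this sketch the mechanism influences future data only through the win/lose signal fed back into the behavior model, which is a simplified picture of why the behavior data depend on the mechanism and are not i.i.d.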
