Gait Library Synthesis for Quadruped Robots via Augmented Random Search

Paper and Code

Dec 30, 2019

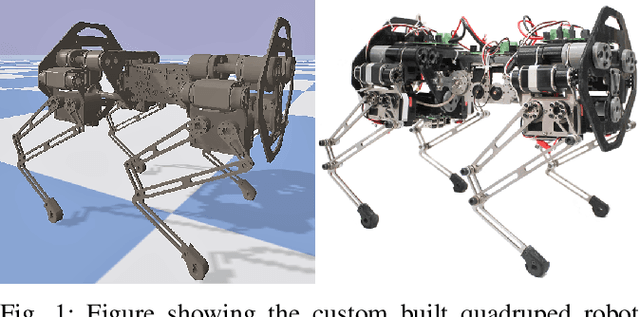

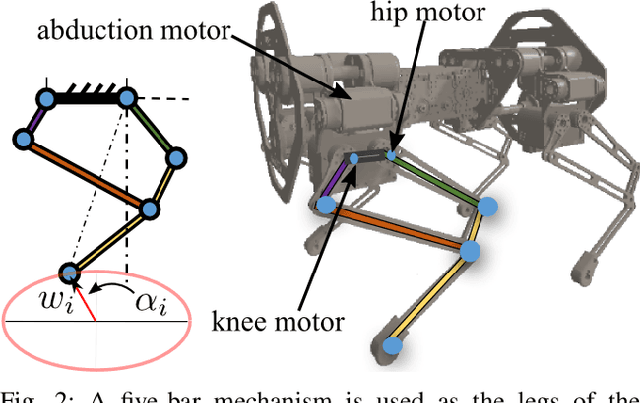

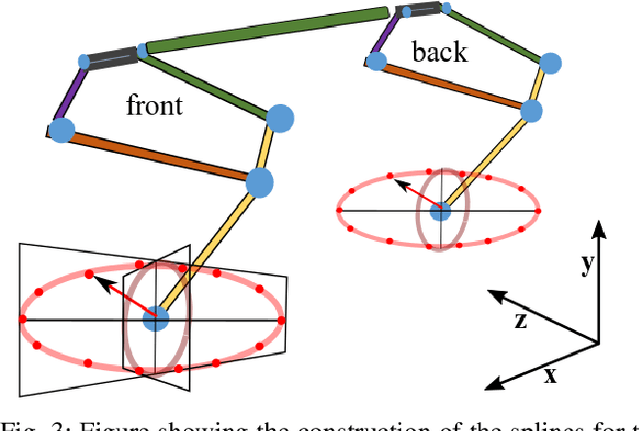

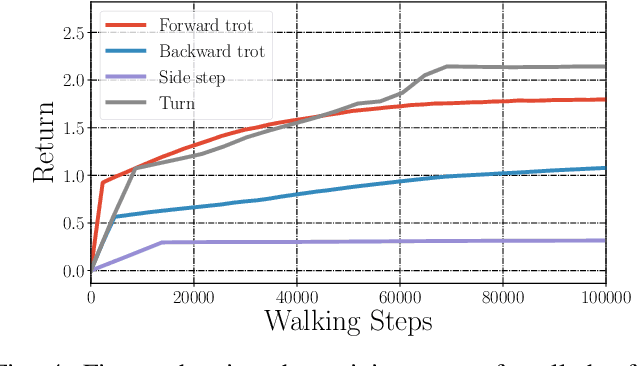

In this paper, with a view toward fast deployment of learned locomotion gaits in low-cost hardware, we generate a library of walking trajectories, namely, forward trot, backward trot, side-step, and turn in our custom-built quadruped robot, Stoch 2, using reinforcement learning. There are existing approaches that determine optimal policies for each time step, whereas we determine an optimal policy, in the form of end-foot trajectories, for each half walking step i.e., swing phase and stance phase. The way-points for the foot trajectories are obtained from a linear policy, i.e., a linear function of the states of the robot, and cubic splines are used to interpolate between these points. Augmented Random Search, a model-free and gradient-free learning algorithm is used to learn the policy in simulation. This learned policy is then deployed on hardware, yielding a trajectory in every half walking step. Different locomotion patterns are learned in simulation by enforcing a preconfigured phase shift between the trajectories of different legs. The transition from one gait to another is achieved by using a low-pass filter for the phase, and the sim-to-real transfer is improved by a linear transformation of the states obtained through regression.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge