FusionMapping: Learning Depth Prediction with Monocular Images and 2D Laser Scans


Nov 29, 2019

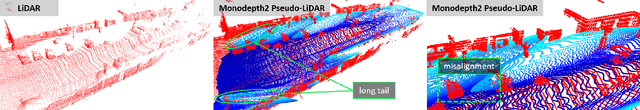

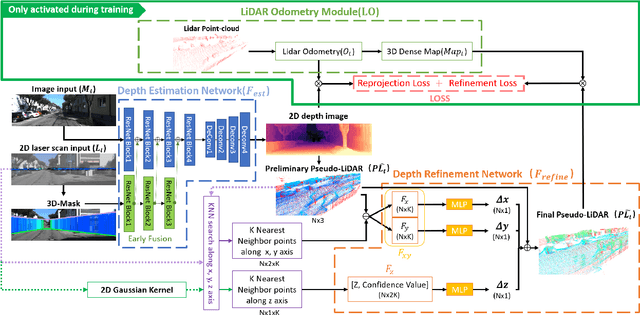

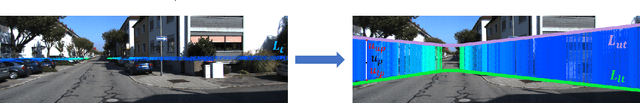

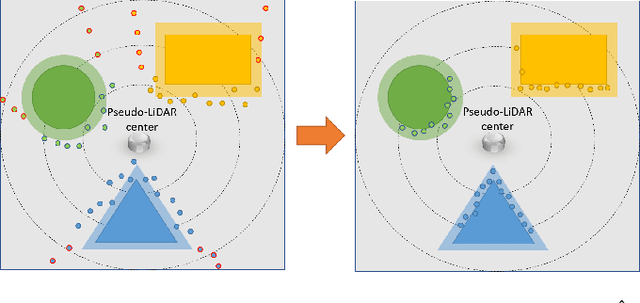

Acquiring accurate three-dimensional depth information conventionally requires expensive multibeam LiDAR devices. Recently, researchers have developed a less expensive option: predicting depth from two-dimensional color imagery. However, a substantial accuracy gap remains between depth estimated from two-dimensional images and real LiDAR point clouds. In this paper, we introduce a fusion-based depth prediction method called FusionMapping. It is the first method that fuses color imagery with two-dimensional laser scans to estimate depth information. More specifically, we propose an autoencoder-based depth prediction network and a novel point-cloud refinement network for depth estimation. We evaluate FusionMapping on the KITTI LiDAR odometry dataset and on an indoor mobile robot system. The results show that the proposed approach estimates depth more accurately than existing methods.
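
The abstract compares predicted depth against LiDAR measurements but does not say which error metrics are used. As an illustration only, the sketch below computes two metrics that are common in depth-prediction work, root-mean-square error and absolute relative error, over the pixels that have a LiDAR return. The function name `depth_errors`, the convention that a ground-truth value of 0 marks a missing return, and the toy arrays are all assumptions made for this example; none of them come from the paper.

```python
import numpy as np


def depth_errors(pred, gt):
    """Return (rmse, abs_rel) over pixels with valid ground truth.

    Assumption for this sketch: gt == 0 marks pixels with no LiDAR return,
    so only pixels with gt > 0 are scored.
    """
    pred = np.asarray(pred, dtype=np.float64)
    gt = np.asarray(gt, dtype=np.float64)
    valid = gt > 0
    if not valid.any():
        raise ValueError("no valid ground-truth pixels")
    diff = pred[valid] - gt[valid]
    rmse = float(np.sqrt(np.mean(diff ** 2)))
    abs_rel = float(np.mean(np.abs(diff) / gt[valid]))
    return rmse, abs_rel


# Toy 2x2 depth maps in metres (hypothetical values, not from the paper).
gt = np.array([[0.0, 10.0], [20.0, 0.0]])   # two pixels have LiDAR returns
pred = np.array([[5.0, 11.0], [18.0, 7.0]])
rmse, abs_rel = depth_errors(pred, gt)
print(f"RMSE: {rmse:.3f} m, AbsRel: {abs_rel:.3f}")
```

Masking matters here because LiDAR ground truth is sparse: scoring pixels without a return would compare predictions against placeholder zeros rather than measured depth.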
