Few-Shot Segmentation via Rich Prototype Generation and Recurrent Prediction Enhancement

Oct 03, 2022

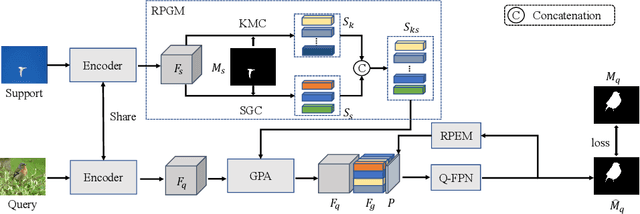

Prototype learning and decoder construction are the keys to few-shot segmentation. However, existing methods use only a single prototype generation mode, which cannot cope with objects of widely varying scale. Moreover, the one-way forward propagation adopted by previous methods may dilute the information from registered features during decoding. In this work, we propose a rich prototype generation module (RPGM) and a recurrent prediction enhancement module (RPEM), which respectively reinforce the prototype learning paradigm and build a unified memory-augmented decoder for few-shot segmentation. Specifically, the RPGM combines superpixel and K-means clustering to generate rich prototype features with complementary scale relationships and to adapt to the scale gap between support and query images. The RPEM uses a recurrent mechanism to design a round-way propagation decoder, so that registered features can continuously provide object-aware information. Experiments show that our method consistently outperforms other competitors on two popular benchmarks, PASCAL-$5^i$ and COCO-$20^i$.
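
To make the idea of generating several prototypes from one support image more concrete, the following is a minimal sketch of the K-means half only. It assumes a support feature map of shape `(H, W, C)` and a binary foreground mask of shape `(H, W)`; the function name `kmeans_prototypes`, the parameters `k`, `iters`, and `seed`, and the random toy features are all hypothetical and are not taken from the paper. The superpixel branch and the way the RPGM fuses the two prototype sets are not reproduced here.

```python
import numpy as np


def kmeans_prototypes(features, mask, k=3, iters=10, seed=0):
    """Cluster foreground feature vectors into k prototypes with plain K-means.

    features: (H, W, C) float array (hypothetical support feature map)
    mask:     (H, W) binary array, 1 = foreground
    returns:  (k, C) array of cluster centers used as prototypes
    """
    fg = features[mask.astype(bool)]  # (N_fg, C) foreground vectors
    if len(fg) == 0:
        raise ValueError("mask contains no foreground pixels")
    k = min(k, len(fg))  # cannot have more clusters than points

    # Initialize centers from k distinct foreground vectors.
    rng = np.random.default_rng(seed)
    centers = fg[rng.choice(len(fg), size=k, replace=False)]

    for _ in range(iters):
        # Squared Euclidean distance from every vector to every center: (N_fg, k)
        dists = ((fg[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = dists.argmin(axis=1)
        # Move each center to the mean of its members; keep it if the cluster is empty.
        for j in range(k):
            members = fg[labels == j]
            if len(members) > 0:
                centers[j] = members.mean(axis=0)
    return centers


# Toy example: random features with a square foreground region (assumed data).
toy_rng = np.random.default_rng(0)
features = toy_rng.normal(size=(8, 8, 16))
mask = np.zeros((8, 8), dtype=np.uint8)
mask[2:6, 2:6] = 1

protos = kmeans_prototypes(features, mask, k=3)
print(protos.shape)
```

Each row of `protos` summarizes one cluster of foreground locations, so several prototypes can describe different parts of the object instead of a single averaged vector.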
