FedIN: Federated Intermediate Layers Learning for Model Heterogeneity

Apr 12, 2023

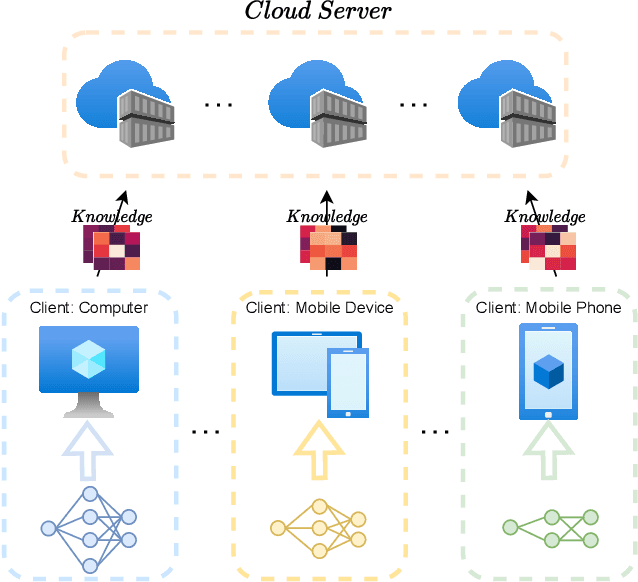

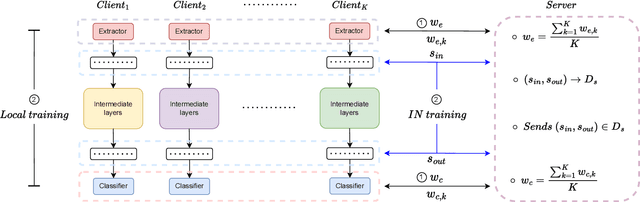

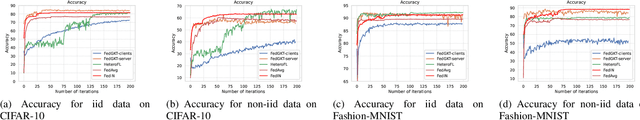

Federated learning (FL) enables edge devices to cooperatively train a shared global model while keeping the training data local and private. However, FL commonly makes the impractical assumption that all participating edge devices have the same resources and share an identical global model architecture. In this study, we propose a novel FL method called Federated Intermediate Layers Learning (FedIN), which supports heterogeneous models without using any public dataset. Each training model in FedIN is divided into three parts: an extractor, the intermediate layers, and a classifier. The architectures of the extractor and the classifier are the same on all devices to keep the intermediate layer features consistent, while the architectures of the intermediate layers can vary across heterogeneous devices according to their resource capacities. To exploit the knowledge contained in these features, we propose IN training, which trains the intermediate layers to align with the features from other clients. Additionally, we formulate and solve a convex optimization problem to mitigate the gradient divergence caused by conflicts between IN training and local training. The experimental results show that FedIN achieves the best performance in the heterogeneous model environment compared with state-of-the-art algorithms. Furthermore, our ablation study demonstrates the effectiveness of both IN training and the solution to the convex optimization problem.
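To make the three-part split concrete, here is a minimal pure-Python sketch of two clients that share the extractor and classifier shapes but use different numbers of intermediate layers. It is not the authors' implementation. The names `ClientModel`, `in_loss`, the layer sizes, the ReLU activations, and the mean-squared-error alignment loss are all assumptions made for illustration; the abstract states only that the intermediate layers are trained in line with features from other clients.

```python
import random


def make_layer(n_in, n_out, rng):
    """Random dense weight matrix with n_out rows and n_in columns."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]


def apply_layer(weights, x, relu=True):
    """Matrix-vector product, optionally followed by ReLU."""
    out = [sum(w * v for w, v in zip(row, x)) for row in weights]
    return [max(0.0, o) for o in out] if relu else out


class ClientModel:
    """Hypothetical client model: extractor -> intermediate layers -> classifier."""

    def __init__(self, in_dim, feat_dim, n_classes, depth, seed):
        rng = random.Random(seed)
        # Extractor and classifier have the same shape on every client.
        self.extractor = make_layer(in_dim, feat_dim, rng)
        self.classifier = make_layer(feat_dim, n_classes, rng)
        # Only the intermediate depth depends on the device's capacity.
        self.intermediate = [make_layer(feat_dim, feat_dim, rng) for _ in range(depth)]

    def extract(self, x):
        return apply_layer(self.extractor, x)

    def run_intermediate(self, feat):
        for layer in self.intermediate:
            feat = apply_layer(layer, feat)
        return feat

    def forward(self, x):
        feat = self.run_intermediate(self.extract(x))
        return apply_layer(self.classifier, feat, relu=False)


def in_loss(model, feat_in, feat_out):
    """Assumed IN-training objective: MSE between this client's intermediate
    output and the output feature shared by another client."""
    pred = model.run_intermediate(feat_in)
    return sum((p - t) ** 2 for p, t in zip(pred, feat_out)) / len(feat_out)


# A low-resource client (1 intermediate layer) and a larger one (3 layers).
small = ClientModel(in_dim=4, feat_dim=8, n_classes=3, depth=1, seed=0)
large = ClientModel(in_dim=4, feat_dim=8, n_classes=3, depth=3, seed=1)

x = [0.1, -0.2, 0.3, 0.4]
feat_in = large.extract(x)                  # feature entering the intermediate layers
feat_out = large.run_intermediate(feat_in)  # feature leaving them
print("alignment loss on the small client:", in_loss(small, feat_in, feat_out))
```

Because both clients share the extractor and classifier shapes, the feature pair `(feat_in, feat_out)` has the same dimensions everywhere, which is what lets a shallow client use features produced by a deeper one. In a real system the loss above would be minimized by gradient descent alongside the local classification loss; that step is omitted here.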
