FederatedTrust: A Solution for Trustworthy Federated Learning

Feb 20, 2023

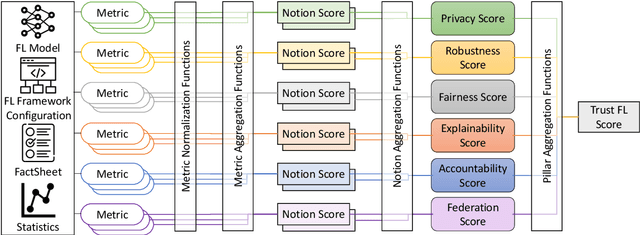

With the growing adoption of the Internet of Things (IoT) and Edge Computing paradigms, centralized Machine and Deep Learning (ML/DL) has become challenging because data are spread across distributed silos that contain sensitive information. Rising concern for data privacy is driving the development of collaborative, privacy-preserving ML/DL techniques such as Federated Learning (FL). FL provides data privacy by design, since participants' local data are not exposed while the global, collaborative model is built. However, data privacy and performance alone are no longer sufficient: model predictions must also be trustworthy. The literature has proposed some works on trustworthy ML/DL (without data privacy), in which robustness, fairness, explainability, and accountability are identified as relevant pillars. More effort is still needed to identify the trustworthiness pillars and evaluation metrics relevant to FL models and to create solutions that compute the trustworthiness level of FL models. This work therefore analyzes the existing requirements for trustworthiness evaluation in FL and proposes a comprehensive taxonomy of six pillars (privacy, robustness, fairness, explainability, accountability, and federation), with notions and more than 30 metrics for computing the trustworthiness of FL models. An algorithm called FederatedTrust has then been designed, following the pillars and metrics of this taxonomy, to compute the trustworthiness score of FL models. A prototype of FederatedTrust has been implemented and deployed into the learning process of FederatedScope, a well-known FL framework. Finally, four experiments using the FEMNIST dataset under different federation configurations of FederatedScope demonstrated the usefulness of FederatedTrust for computing the trustworthiness of FL models.
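
The abstract does not say how FederatedTrust combines its metrics into a single score, so the following is only an illustrative sketch of one plausible scheme, not the paper's algorithm. It assumes every metric has already been normalized to the range [0, 1], that a pillar's score is the mean of its metrics, and that the overall score is a weighted mean over the six pillars. The function names, the metric names, and the weights are all hypothetical.

```python
from statistics import fmean

# The six pillars named in the abstract.
PILLARS = (
    "privacy", "robustness", "fairness",
    "explainability", "accountability", "federation",
)


def pillar_score(metrics):
    """Mean of one pillar's metrics (assumption: each metric lies in [0, 1])."""
    if not metrics:
        raise ValueError("a pillar needs at least one metric")
    for name, value in metrics.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"metric {name!r} is outside [0, 1]: {value}")
    return fmean(metrics.values())


def trust_score(metrics_by_pillar, weights=None):
    """Weighted mean of the six pillar scores (assumed aggregation rule)."""
    if weights is None:
        # Assumption: all pillars count equally unless weights are given.
        weights = {pillar: 1.0 for pillar in PILLARS}
    total_weight = sum(weights[pillar] for pillar in PILLARS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(
        weights[pillar] * pillar_score(metrics_by_pillar[pillar])
        for pillar in PILLARS
    )
    return weighted_sum / total_weight


# Hypothetical, already-normalized metric values for one FL model.
example_metrics = {
    "privacy": {"differential_privacy": 1.0, "uncertainty": 0.6},
    "robustness": {"attack_resilience": 0.5},
    "fairness": {"client_selection_balance": 0.7},
    "explainability": {"feature_importance_coverage": 0.4},
    "accountability": {"documentation_completeness": 0.9},
    "federation": {"client_participation": 0.8},
}

if __name__ == "__main__":
    print(f"trust score: {trust_score(example_metrics):.3f}")
```

Passing a `weights` dictionary lets a reader emphasize one pillar over the others, for example weighting privacy more heavily in a deployment that handles sensitive data.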
