Feasible Recourse Plan via Diverse Interpolation

Paper and Code

Feb 22, 2023

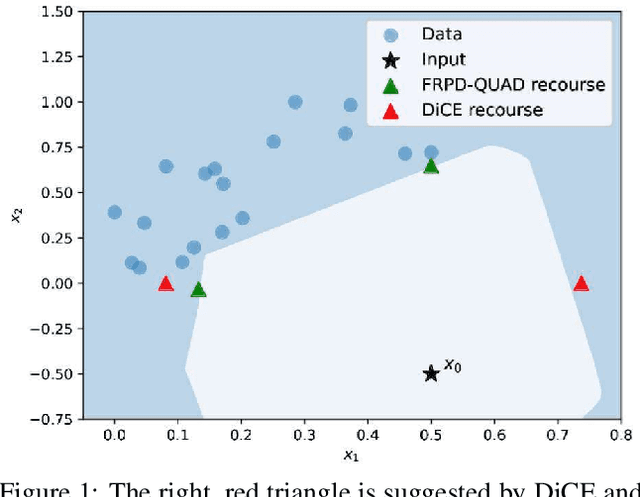

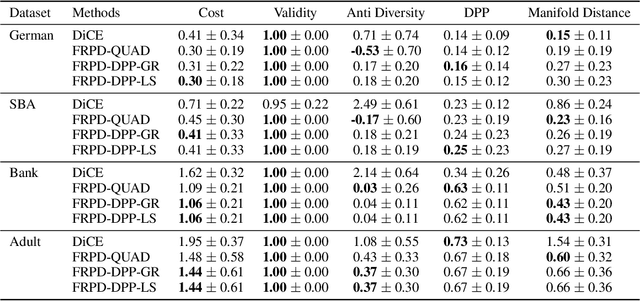

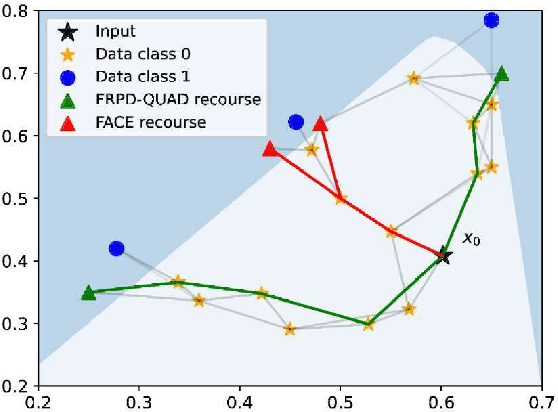

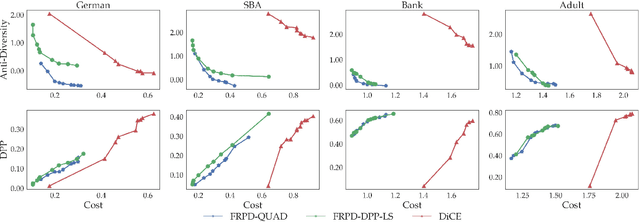

Explaining algorithmic decisions and recommending actionable feedback is increasingly important for machine learning applications. Recently, significant efforts have been invested in finding a diverse set of recourses to cover the wide spectrum of users' preferences. However, existing works often neglect the requirement that the recourses should be close to the data manifold; hence, the constructed recourses might be implausible and unsatisfying to users. To address these issues, we propose a novel approach that explicitly directs the diverse set of actionable recourses towards the data manifold. We first find a diverse set of prototypes in the favorable class that balances the trade-off between diversity and proximity. We demonstrate two specific methods to find these prototypes: either by finding the maximum a posteriori estimate of a determinantal point process or by solving a quadratic binary program. To ensure the actionability constraints, we construct an actionability graph in which the nodes represent the training samples and the edges indicate the feasible action between two instances. We then find a feasible path to each prototype, and this path demonstrates the feasible actions for each recourse in the plan. The experimental results show that our method produces a set of recourses that are close to the data manifold while delivering a better cost-diversity trade-off than existing approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge