Faster ILOD: Incremental Learning for Object Detectors based on Faster RCNN

Paper and Code

Mar 09, 2020

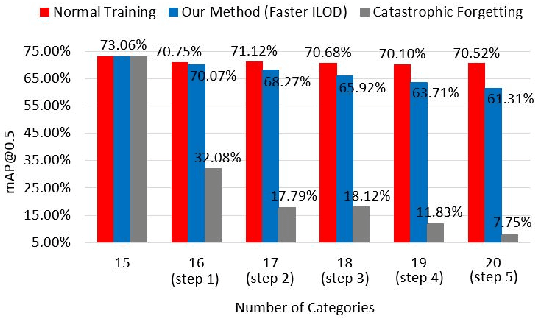

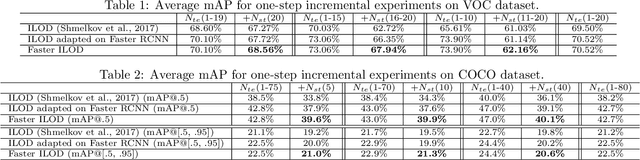

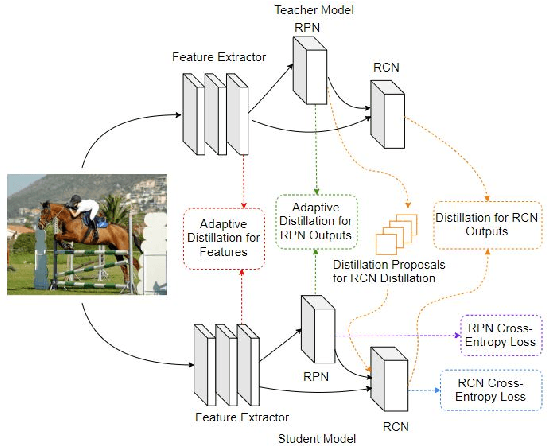

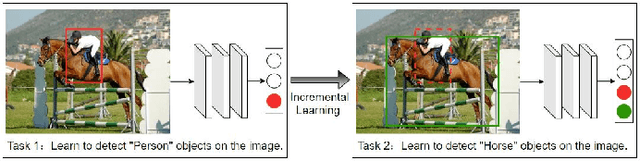

The human vision and perception system is inherently incremental: new knowledge is continually learned over time while existing knowledge is retained. Deep learning networks, by contrast, are ill-equipped for incremental learning. When a well-trained network is adapted to new categories, its performance on the old categories degrades dramatically. To address this problem, incremental learning methods have been explored to preserve the old knowledge of deep learning models. However, the state-of-the-art incremental object detector employs an external, fixed region proposal method that increases overall computation time and reduces accuracy compared to object detectors such as Faster RCNN that use trainable Region Proposal Networks (RPNs). The purpose of this paper is to design an efficient end-to-end incremental object detector using knowledge distillation for object detectors based on RPNs. We first evaluate and analyze the performance of an RPN-based detector with classic distillation on incremental detection tasks. Then, we introduce multi-network adaptive distillation that properly retains knowledge from the old categories when fine-tuning the model for a new task. Experiments on the benchmark datasets PASCAL VOC and COCO demonstrate that the proposed incremental detector is more accurate than the baseline detector and 13 times faster.
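The abstract does not spell out its loss functions, so the following is only a generic illustration of the "classic distillation" idea it mentions, not the paper's multi-network adaptive distillation. It assumes a frozen copy of the old model (the teacher) and a model being fine-tuned (the student), and penalizes drift in the student's old-class logits while training on a new-class label. All function names, the L2 form of the penalty, and the `weight` parameter are hypothetical choices made for this sketch.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, label):
    """Standard classification loss for a single example."""
    return -math.log(softmax(logits)[label])

def distillation_l2(student_logits, teacher_logits, num_old):
    """Mean squared difference on the old-class logits only.

    Assumption: the first `num_old` entries of the student's logits
    correspond to the classes the teacher was trained on.
    """
    pairs = zip(student_logits[:num_old], teacher_logits[:num_old])
    return sum((s - t) ** 2 for s, t in pairs) / num_old

def incremental_loss(student_logits, teacher_logits, label, num_old, weight=1.0):
    """New-task loss plus a weighted penalty for forgetting old classes."""
    task = cross_entropy(student_logits, label)
    distill = distillation_l2(student_logits, teacher_logits, num_old)
    return task + weight * distill
```

With two old classes and one new class, a student whose old-class logits match the teacher's pays no distillation penalty, so `incremental_loss` reduces to the plain cross-entropy on the new label. Any drift in the old-class logits raises the loss in proportion to `weight`.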
