Fairer Software Made Easier (using "Keys")

Paper and Code

Jul 11, 2021

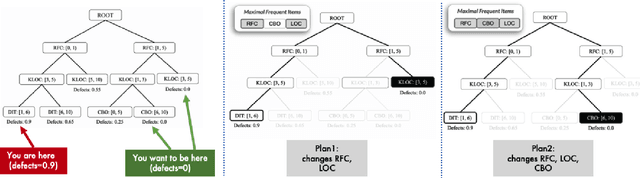

Can we simplify explanations for software analytics? Maybe. Recent results show that systems often exhibit a "keys effect": a few key features control the rest. For systems controlled by a few keys, explanation and control reduce to running a handful of "what-if" queries across those keys. By exploiting the keys effect, it should be possible to dramatically simplify even complex explanations, such as those required for ethical AI systems.
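To make the "what-if queries across the keys" idea concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than the method from the paper: `toy_model`, `spread`, `find_keys`, and `what_if` are hypothetical names, the model is a made-up function of 8 binary features in which only features 0 and 3 matter, and the brute-force key detection is used only because the example is tiny.

```python
from itertools import product

def toy_model(x):
    """Hypothetical system: 8 binary features, but only features 0 and 3 affect the output."""
    return 10 * x[0] + 5 * x[3]

def spread(model, n_features, index):
    """Largest output change caused by flipping one feature from 0 to 1,
    checked over every setting of the other features (brute force, toy scale only)."""
    best = 0
    for bits in product([0, 1], repeat=n_features):
        if bits[index] == 1:
            continue  # only start from settings where the feature is off
        flipped = list(bits)
        flipped[index] = 1
        best = max(best, abs(model(flipped) - model(bits)))
    return best

def find_keys(model, n_features, threshold=0):
    """Features whose flip can move the output by more than `threshold`."""
    return [i for i in range(n_features) if spread(model, n_features, i) > threshold]

def what_if(model, n_features, keys, baseline=0):
    """Run one query per setting of the key features, holding all others at `baseline`."""
    results = {}
    for setting in product([0, 1], repeat=len(keys)):
        x = [baseline] * n_features
        for k, v in zip(keys, setting):
            x[k] = v
        results[setting] = model(x)
    return results

keys = find_keys(toy_model, 8)          # features that control the output
table = what_if(toy_model, 8, keys)     # one row per key setting
for setting, outcome in table.items():
    print(dict(zip(keys, setting)), "->", outcome)
```

In this toy case the full input space has 2^8 = 256 settings, yet the four queries over the two keys are enough to describe the model's behavior. A real system would need a cheaper way to find the keys than exhaustive enumeration.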

* Submitted to NIER ASE 2021 (new ideas, emerging research)
