Extracting Qualitative Causal Structure with Transformer-Based NLP

Aug 20, 2021

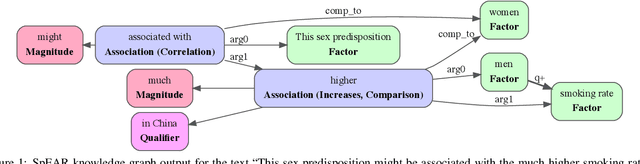

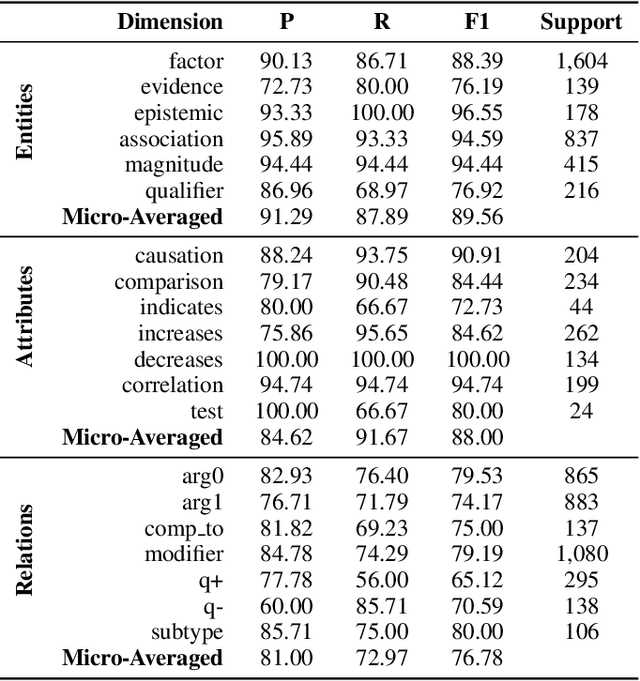

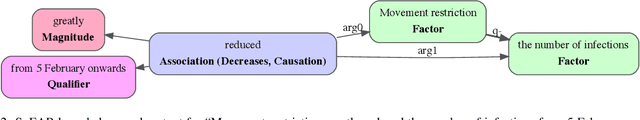

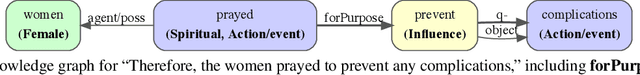

Qualitative causal relationships compactly express the direction, dependency, temporal constraints, and monotonicity constraints of discrete or continuous interactions in the world. In everyday or academic language, we may express interactions between quantities (e.g., sleep decreases stress), between discrete events or entities (e.g., a protein inhibits another protein's transcription), or between intentional or functional factors (e.g., hospital patients pray to relieve their pain). This paper presents a transformer-based NLP architecture that jointly identifies and extracts (1) variables or factors described in language, (2) qualitative causal relationships over these variables, and (3) qualifiers and magnitudes that constrain these causal relationships. We demonstrate this approach and report promising results from two use cases, processing textual inputs from academic publications, news articles, and social media.
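
To make the three kinds of extracted output concrete, the following is a minimal sketch of how variables, qualitative causal relationships, and qualifiers might be represented once extracted. It is not the paper's schema or model: the class names, the relation labels, and the `SIGN` mapping are all hypothetical, chosen only to encode the examples given in the abstract.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class Variable:
    """A variable or factor mentioned in text (hypothetical structure)."""
    text: str


@dataclass
class CausalRelation:
    """A qualitative causal link between two variables (hypothetical structure)."""
    cause: Variable
    effect: Variable
    relation: str                                         # assumed label, e.g. "decreases"
    qualifiers: List[str] = field(default_factory=list)   # free-text constraints or magnitudes


# Assumed mapping from relation labels to a monotonic direction.
# Labels that are not monotonic (e.g. intentional links) are simply absent.
SIGN = {"increases": 1, "decreases": -1, "inhibits": -1}


def monotonic_sign(rel: CausalRelation) -> Optional[int]:
    """Return +1 or -1 for monotonic relations, or None when no direction applies."""
    return SIGN.get(rel.relation)


# The three examples from the abstract, encoded by hand for illustration.
sleep_stress = CausalRelation(Variable("sleep"), Variable("stress"), "decreases")
protein = CausalRelation(
    Variable("a protein"), Variable("another protein's transcription"), "inhibits"
)
prayer = CausalRelation(
    Variable("hospital patients pray"), Variable("relieve their pain"), "intends"
)

for rel in (sleep_stress, protein, prayer):
    print(rel.cause.text, "->", rel.effect.text, "| sign:", monotonic_sign(rel))
```

Separating the relation label from its sign keeps intentional or functional links, such as the prayer example, in the same structure without forcing a monotonic direction onto them.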
