Expressivity of Deep Neural Networks

Jul 09, 2020

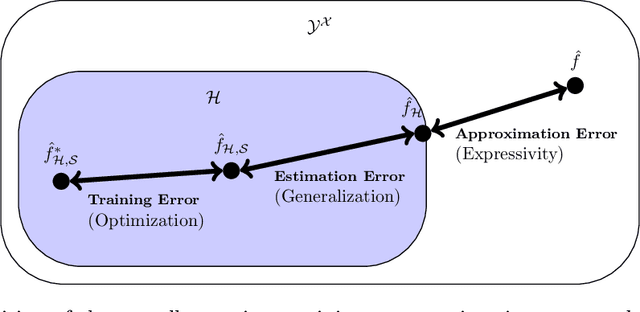

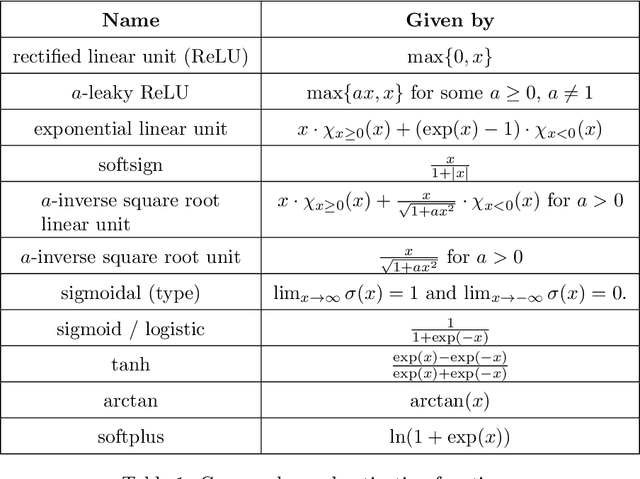

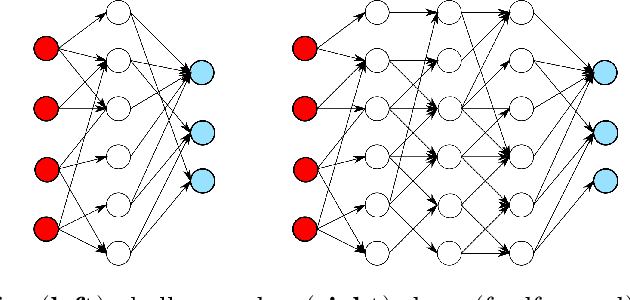

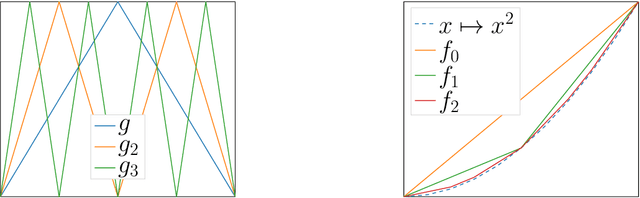

In this review paper, we give a comprehensive overview of the large variety of approximation results for neural networks. We discuss approximation rates for classical function spaces as well as the benefits of deep neural networks over shallow ones for specifically structured function classes. While the main body of existing results concerns general feedforward architectures, we also present approximation results for convolutional, residual, and recurrent neural networks.

* This review paper will appear as a book chapter in the book "Theory of Deep Learning" by Cambridge University Press.
